Table of Contents

- Why Is Your Cloud Bill So High?

- The 6 Root Causes of Cloud Waste

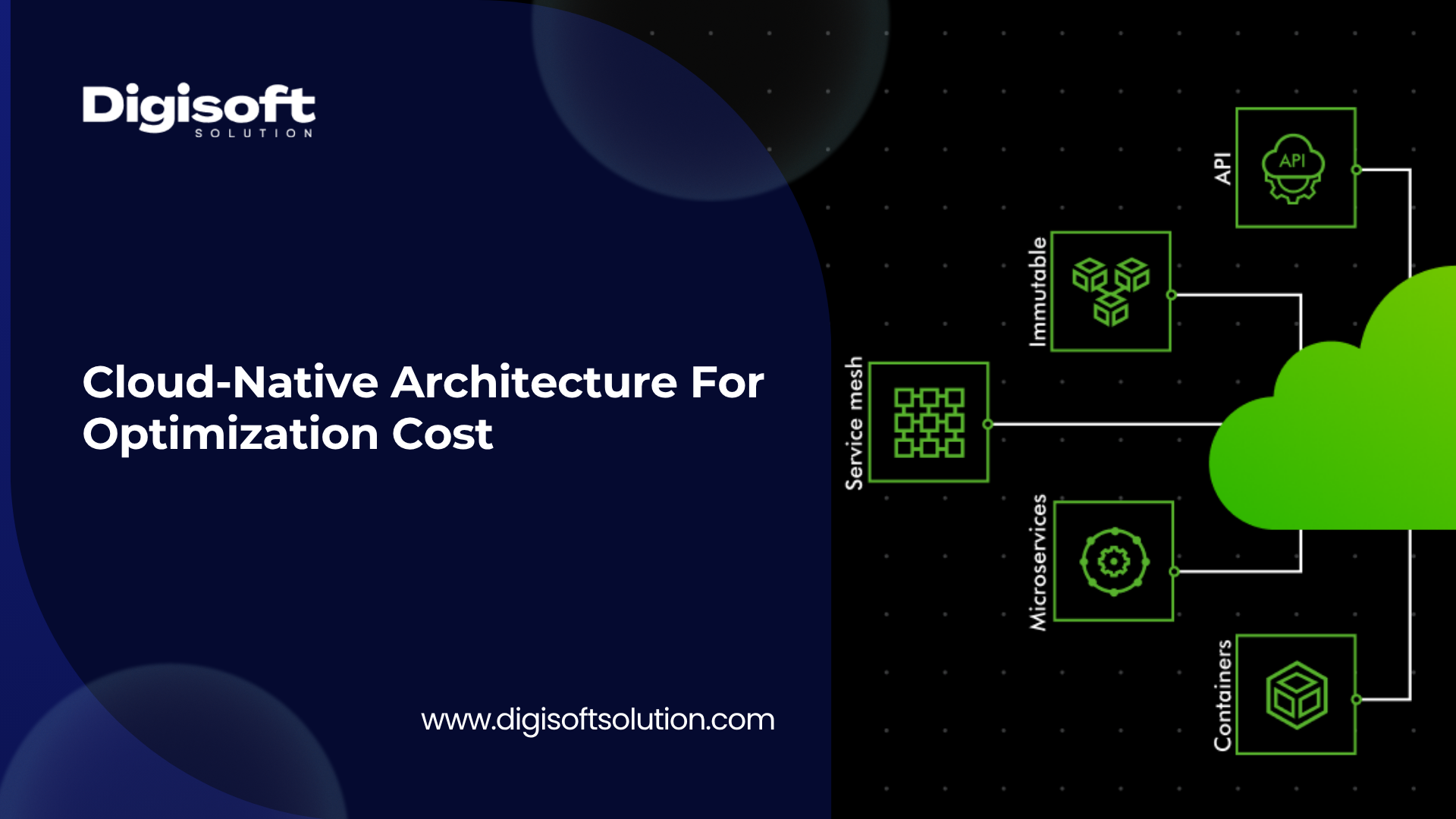

- What Is Cloud-Native Architecture?

- The 5 Core Building Blocks

- Cloud-Native vs Traditional Architecture

- How Cloud-Native Architecture Cuts Your Cloud Costs

- 1. Autoscaling

- Real Example

- 2. Microservices

- 3. Serverless Computing

- 4. Infrastructure as Code

- 5. Smart Instance Purchasing

- FinOps Integration

- What It Does

- Core Practices

- AI-Powered Automated Optimization

- What It Does

- How It Works

- Egress Costs

- Toolchain Sprawl

- Snapshot Creep

- The Lift-and-Shift Trap

- Wrong Region Pricing

- Security Retrofitting

- Phase 1: Visibility (Weeks 1 to 2)

- Goal

- Actions

- Expected Outcome

- Phase 2: Containerize (Months 1 to 2)

- Goal

- Actions

- Expected Outcome

- Phase 3: Microservices and Serverless (Months 3 to 6)

- Goal

- Actions

- Expected Outcome

- Phase 4: Automate and Govern (Ongoing)

- Goal

- Actions

- Expected Outcome

- Digisoft Solution for Cloud-Native Architecture

- What Digisoft Solution Does for Cloud-Native

- Cloud-Native Application Development

- Infrastructure as Code and DevOps

- DevSecOps and Security-First Architecture

- Legacy Application Modernization

- AI and Intelligent Automation

- Managed Services and Continuous Optimization

- The Cloud-Native Cost Optimization Toolchain

- Cost Visibility Tools

- AWS Cost Explorer

- Azure Cost Management

- Google Cloud Cost Management

- Infrastructure and Orchestration Tools

- Terraform

- Kubernetes with HPA

- AWS Lambda, Azure Functions, Google Cloud Functions

- Automated Optimization Tools

- CAST AI

- Spot.io by NetApp

- AWS Graviton and Azure Ampere ARM Instances

- Observability Tools

- Datadog

- New Relic

- Prometheus and Grafana

- What Your Business Actually Gains

- Cost Reduction

- Deployment Speed

- Security Posture

- Scalability

- Operational Efficiency

- Cost Transparency

- Frequently Asked Questions

- Is cloud-native only for large enterprises?

- Do we need to rewrite our entire application?

- How does cloud-native handle multi-cloud or hybrid environments?

- How quickly will we see real cost savings?

- What is the biggest risk of going cloud-native?

- Which cloud provider is best for cloud-native?

- What if our team does not have cloud-native expertise?

Digital Transform with Us

Please feel free to share your thoughts and we can discuss it over a cup of coffee.

By the numbers:

30% average cloud overspend across businesses (FinOps Foundation, 2025)

45% cost reduction possible through serverless adoption (IEEE, 2025)

Up to 75% discount on Reserved Instances vs. on-demand pricing

90% savings possible with Spot Instances for batch workloads

50%+ savings delivered by automated cloud optimization tools (CAST AI, 2025)

$723B global cloud spend projected in 2025 (Gartner)

Why Is Your Cloud Bill So High?

The promise of the cloud is simple: pay only for what you use. The reality for most businesses is far more expensive. Teams overprovision servers to handle peak loads, forget about idle resources, and have no real visibility into where money is going. By the time the invoice arrives, the damage is done.

According to the FinOps Foundation, 42% of CIOs and CTOs now rank cloud waste as their number one operational challenge. One company famously received a $65 million cloud bill in a single quarter, all from unmanaged resource sprawl.

The 6 Root Causes of Cloud Waste

- Overprovisioned servers: Teams size infrastructure for peak demand and leave it running 24/7. A server at 10% utilization costs exactly the same as one at 90%.

- Monolithic architecture: When one service spikes in a monolith, you must scale the entire application, wasting resources on every other component.

- No cost visibility: Without resource tagging and real-time dashboards, teams cannot see where money goes. You cannot optimize what you cannot measure.

- Manual operations: Human-managed scaling is reactive and slow. By the time an engineer notices a traffic spike, the billing meter has been running for hours.

- Orphaned resources: Developers spin up servers, databases, and load balancers for software testing and forget to shut them down. Old snapshots multiply silently.

- Shadow IT and sprawl: Teams provision cloud resources outside central governance. No tagging, no ownership, no accountability, and no way to stop the bleed.
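The overprovisioning point can be made concrete with a little arithmetic. The sketch below is illustrative only: the hourly price is a hypothetical figure, not a real provider rate, and `cost_per_useful_hour` is a helper name invented for this example.

```python
def cost_per_useful_hour(hourly_price: float, utilization: float) -> float:
    """Effective price of one fully used server-hour at a given utilization."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in the range (0, 1]")
    return hourly_price / utilization


# Hypothetical on-demand price of $0.20 per hour (an assumption for illustration).
PRICE = 0.20
print(round(cost_per_useful_hour(PRICE, 0.10), 2))  # server running at 10% utilization
print(round(cost_per_useful_hour(PRICE, 0.90), 2))  # server running at 90% utilization
```

The invoice is identical in both cases, but the price paid per unit of useful work is roughly nine times higher on the underused server.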

Cloud waste is not a billing problem. It is an architecture problem. The only permanent fix is building systems that are designed from the start to use resources efficiently.

What Is Cloud-Native Architecture?

Cloud-native is not the same as being in the cloud. Most businesses move their existing applications to cloud servers without redesigning them. That strategy is called lift-and-shift and delivers almost none of the cloud efficiency benefits while still costing cloud prices.

Cloud-native means designing and operating applications from the ground up to exploit what cloud infrastructure actually offers: elasticity, automation, distributed resilience, and pay-per-use pricing. Components scale up quickly under load, scale down (in many cases to zero) when idle, and recover automatically from failure.

The 5 Core Building Blocks

1. Microservices: Break the application into small, independent services. Each does one job. You scale only the component that needs it and fix only the part that breaks.

2. Containers (Docker): Package each service with all its dependencies so it runs identically anywhere. No environment mismatch, no wasted compute, and easy portability across clouds.

3. Container Orchestration (Kubernetes): Automatically schedules, scales, and heals containers. Bin-packs workloads onto fewer nodes so you never pay for idle server capacity.

4. Serverless Functions: AWS Lambda, Azure Functions, and Google Cloud Functions run code only when triggered and bill for actual execution time in fine-grained increments (AWS Lambda, for example, meters per millisecond). Zero idle cost.

5. Infrastructure as Code: Terraform, AWS CloudFormation, and Pulumi define your infrastructure in code. Provision exactly what you need every time with no human guesswork.

Cloud-Native vs Traditional Architecture

- Scaling: Traditional: buy a bigger server. Cloud-native: automatically add instances under load and remove them when demand drops.

- Cost Model: Traditional: pay for fixed capacity upfront. Cloud-native: pay per use and scale to zero when idle.

- Deployment: Traditional: manual, infrequent, risky releases. Cloud-native: automated CI/CD with multiple production deployments per day.

- Failure Handling: Traditional: manual restart and downtime. Cloud-native: self-healing and automated failover with no human intervention required.

- Resource Waste: Traditional: always-on and chronically over-provisioned. Cloud-native: scales down to zero, so you pay nothing when idle.

How Cloud-Native Architecture Cuts Your Cloud Costs

Each component of cloud-native architecture eliminates a specific type of waste. Here is exactly how every piece works and what it saves.

1. Autoscaling

What It Does

Autoscaling matches compute to real demand in real time instead of running at 100% provisioned capacity around the clock.

How It Works

• Kubernetes Horizontal Pod Autoscaler scales containers based on CPU usage, memory pressure, or custom business metrics like request queue depth

• Cluster Autoscaler adds cloud nodes when workloads need more capacity and removes them when they go idle

• Scale-to-zero means services consume zero compute and zero cost when not in use

• Event-driven autoscaling ties scaling decisions directly to business metrics for maximum precision
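The core scaling decision of the Horizontal Pod Autoscaler can be sketched in a few lines. The Kubernetes documentation describes the ratio `desiredReplicas = ceil(currentReplicas * currentMetric / targetMetric)`. The function below is a simplified illustration of that ratio only: it ignores the tolerance band, stabilization windows, and pod readiness handling that the real controller applies, and the function name and default bounds are assumptions for this example.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, min_replicas: int = 1,
                     max_replicas: int = 100) -> int:
    """Replica count from the HPA ratio, clamped to the configured bounds."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


# 2 pods averaging 90% CPU against a 50% target: scale out.
print(desired_replicas(2, current_metric=90, target_metric=50))
# 4 pods averaging 20% CPU against a 50% target: scale in.
print(desired_replicas(4, current_metric=20, target_metric=50))
```

The same ratio applies whether the metric is CPU, memory, or a custom value such as queue depth, which is why tying the target to a business metric gives more precise scaling.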

Real Example

An e-commerce platform scales its checkout service from 2 pods to 40 during a flash sale and back to 2 within minutes. With traditional architecture you would pay for 40 servers 24 hours a day just to be safe.
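A rough calculation shows the size of the gap. The pod counts come from the example above. The 3-hour sale length is an assumption added for illustration, and the sketch ignores ramp-up and ramp-down time.

```python
def pod_hours(baseline_pods: int, peak_pods: int, peak_hours: int,
              hours_in_day: int = 24) -> int:
    """Pod-hours used in one day when peak capacity runs only during the peak."""
    return peak_pods * peak_hours + baseline_pods * (hours_in_day - peak_hours)


# Always-on: 40 pods for all 24 hours.
always_on = pod_hours(baseline_pods=40, peak_pods=40, peak_hours=24)
# Autoscaled: 40 pods for an assumed 3-hour sale, 2 pods for the rest of the day.
autoscaled = pod_hours(baseline_pods=2, peak_pods=40, peak_hours=3)
saving_pct = 100 * (1 - autoscaled / always_on)

print(always_on, autoscaled, round(saving_pct, 1))
```

Under these assumptions the autoscaled service uses 162 pod-hours instead of 960 on the day of the sale.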

2. Microservices

What It Does

Microservices decouple services so you only pay to scale the bottleneck instead of the entire application.

How It Works

• Your payment processor scales independently from your product catalogue, homepage, and user authentication service

• A traffic spike on search does not force you to add capacity to your notification service or reporting dashboard

• Each service is right-sized to its actual workload with no excess capacity carried anywhere in the system

• Low-traffic services can run on cheap Spot Instances or scale to zero entirely

The Result

Instead of running 20 over-sized servers, you run 50 right-sized containers that collectively consume far less compute and cost significantly less.
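The difference can be illustrated with a toy capacity model. Everything here is hypothetical: the service names, the capacity units, and the 5x spike are assumptions, and the model treats a monolith as something that can only be scaled in whole copies.

```python
def monolith_capacity(services: dict[str, int], factor: int) -> int:
    """A monolith scales as one unit, so every component is multiplied."""
    return sum(services.values()) * factor


def microservices_capacity(services: dict[str, int], spiking: str, factor: int) -> int:
    """Only the spiking service is multiplied; the rest stay at baseline."""
    return sum(units * (factor if name == spiking else 1)
               for name, units in services.items())


# Hypothetical baseline capacity units per component.
baseline = {"search": 4, "catalogue": 3, "notifications": 1, "reporting": 2}

print(monolith_capacity(baseline, factor=5))                 # whole app scaled 5x
print(microservices_capacity(baseline, "search", factor=5))  # only search scaled 5x
```

With these numbers a 5x search spike needs 50 units in the monolith and 26 units with independent services.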

3. Serverless Computing

What It Does

Serverless is the most cost-efficient model for event-driven and irregular workloads. You pay nothing when the function is not running.

How It Works

• Billing is based on actual execution time, metered in fine-grained increments (AWS Lambda, for example, bills per millisecond)

• No servers to provision, patch, update, or maintain

• Serverless databases like AWS Aurora Serverless and Azure Cosmos DB Serverless automatically scale capacity to match query volume

• Event-driven APIs built on API Gateway and Lambda provide highly scalable backend infrastructure with near-zero idle cost

Impact

Organizations that properly implement serverless patterns report up to 45% infrastructure cost reductions (IEEE, 2025). For image processing, data pipelines, and notifications, serverless is often 10x cheaper than dedicated compute.
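Whether serverless is cheaper depends on volume, so it helps to estimate a break-even point. The sketch below uses a common pricing shape (a charge per GB-second of execution plus a charge per million requests). All prices, the memory size, the duration, and the $60 instance cost are hypothetical values chosen for illustration, so substitute your provider's current rates.

```python
def serverless_monthly_cost(invocations: int, avg_duration_s: float, memory_gb: float,
                            price_per_gb_second: float,
                            price_per_million_requests: float) -> float:
    """Monthly cost under a GB-second plus per-request pricing model."""
    compute = invocations * avg_duration_s * memory_gb * price_per_gb_second
    requests = invocations / 1_000_000 * price_per_million_requests
    return compute + requests


def break_even_invocations(instance_monthly_cost: float, avg_duration_s: float,
                           memory_gb: float, price_per_gb_second: float,
                           price_per_million_requests: float) -> int:
    """Monthly invocations at which serverless costs as much as a fixed instance."""
    per_invocation = (avg_duration_s * memory_gb * price_per_gb_second
                      + price_per_million_requests / 1_000_000)
    return int(instance_monthly_cost / per_invocation)


# Hypothetical prices and workload shape (assumptions, not real rates).
PRICING = dict(avg_duration_s=0.2, memory_gb=0.5,
               price_per_gb_second=0.00002, price_per_million_requests=0.20)

print(round(serverless_monthly_cost(1_000_000, **PRICING), 2))
print(break_even_invocations(60.0, **PRICING))
```

Below the break-even volume the function is cheaper than the always-on instance. Above it, dedicated or containerized compute may be the better fit.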

4. Infrastructure as Code

What It Does

IaC removes the guesswork from provisioning. Every environment is defined exactly in code with no manual padding or accidental over-sizing.

How It Works

• Infrastructure definitions are version-controlled so every change is tracked, reviewed, and auditable

• Ephemeral test environments are created on demand and destroyed immediately after use

• Reproducible deployments eliminate environment drift, a common cause of unexpected cost spikes
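Drift is simply the difference between what the code declares and what is actually running. The following is a toy model of that comparison, not how Terraform or any other IaC tool works internally. The resource names and attributes are hypothetical.

```python
def detect_drift(declared: dict[str, dict], actual: dict[str, dict]) -> dict[str, list[str]]:
    """Compare declared infrastructure against what is actually running."""
    return {
        # Running but not in code: likely created by hand and still billing.
        "unmanaged": sorted(actual.keys() - declared.keys()),
        # In code but not running.
        "missing": sorted(declared.keys() - actual.keys()),
        # Present in both but with different settings (for example, a resized instance).
        "changed": sorted(name for name in declared.keys() & actual.keys()
                          if declared[name] != actual[name]),
    }


# Hypothetical resources for illustration.
declared = {"api": {"size": "medium", "count": 2}, "db": {"size": "large", "count": 1}}
actual = {"api": {"size": "xlarge", "count": 2}, "db": {"size": "large", "count": 1},
          "test-vm": {"size": "large", "count": 1}}

print(detect_drift(declared, actual))
```

Here the manually resized `api` service and the forgotten `test-vm` are exactly the kind of changes that show up later as an unexplained cost increase.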

5. Smart Instance Purchasing

What It Does

Cloud providers offer massive discounts for committed or flexible usage patterns. Most teams never capture them.

Pricing Tiers

On-Demand Instances

Full price. Use for genuinely unpredictable short-lived spikes. Flexible but the most expensive option.

Reserved Instances

Commit to a 1-year or 3-year term and save up to 75% on predictable always-on workloads like databases and core API services.

Savings Plans

A more flexible commitment that saves 40 to 70% across EC2, Lambda, and Fargate without locking into specific instance types.

Spot Instances

Use spare cloud capacity at 70 to 90% discount for fault-tolerant workloads like batch processing, ML training, and video transcoding.

ARM-Based Instances

AWS Graviton and Azure Ampere processors can deliver performance comparable to x86 for many workloads at 20 to 40% lower cost and are well-supported by containerized workloads.
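Mixing these purchasing options changes the blended bill. The sketch below estimates that effect. The discount rates are illustrative picks from within the ranges quoted above, and the workload split is hypothetical, so treat the output as an example of the method rather than a forecast.

```python
# Illustrative discount rates chosen from the ranges quoted above (assumptions).
DISCOUNTS = {"on_demand": 0.0, "reserved": 0.60, "savings_plan": 0.40, "spot": 0.70}


def blended_monthly_cost(on_demand_equivalent: dict[str, float],
                         discounts: dict[str, float] = DISCOUNTS) -> float:
    """Total cost when each slice of spend is bought under a different model.

    on_demand_equivalent maps a purchasing model to what that slice would cost
    at full on-demand price.
    """
    return sum(spend * (1 - discounts[model])
               for model, spend in on_demand_equivalent.items())


# Hypothetical $10,000 per month on-demand bill, split by workload type.
mix = {"reserved": 6000.0,   # steady databases and core APIs
       "spot": 3000.0,       # fault-tolerant batch jobs
       "on_demand": 1000.0}  # unpredictable spikes

total = blended_monthly_cost(mix)
print(round(total, 2), round(100 * (1 - total / sum(mix.values())), 1))
```

With this split the blended bill is about $4,300, or roughly 57% below paying on-demand for everything.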

FinOps Integration

What It Does

FinOps makes cloud cost optimization a continuous, shared engineering and business responsibility rather than a quarterly finance review.

Core Practices

• Resource tagging: tag every resource by team, project, and environment so every dollar is attributable and accountable

• Real-time dashboards: AWS Cost Explorer, Azure Cost Management, and Google Cloud Cost Management provide live visibility

• Budget alerts: automated notifications when spend approaches defined thresholds prevent surprise bills

• Unit economics: track cost per user, cost per transaction, and cost per API call to connect spend to business value

• Waste rate monitoring: target below 25% waste rate and treat anything above that as an urgent priority
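Tagging and unit economics are easy to prototype before buying any tooling. The sketch below is a minimal illustration that assumes billing data has already been exported into a list of records. The field names, resource IDs, and figures are hypothetical.

```python
from collections import defaultdict

REQUIRED_TAGS = ("team", "project", "environment")


def attribute_costs(resources: list[dict]) -> tuple[dict[str, float], list[str]]:
    """Sum monthly cost per team and list resources missing any required tag."""
    by_team: dict[str, float] = defaultdict(float)
    untagged: list[str] = []
    for resource in resources:
        tags = resource.get("tags", {})
        if all(tags.get(key) for key in REQUIRED_TAGS):
            by_team[tags["team"]] += resource["monthly_cost"]
        else:
            untagged.append(resource["id"])
    return dict(by_team), untagged


def cost_per_unit(total_cost: float, units: int) -> float:
    """Unit economics: cost per user, transaction, or API call."""
    if units <= 0:
        raise ValueError("units must be positive")
    return total_cost / units


# Hypothetical billing export.
resources = [
    {"id": "vm-1", "monthly_cost": 400.0,
     "tags": {"team": "checkout", "project": "shop", "environment": "prod"}},
    {"id": "db-1", "monthly_cost": 600.0,
     "tags": {"team": "checkout", "project": "shop", "environment": "prod"}},
    {"id": "vm-2", "monthly_cost": 250.0,
     "tags": {"team": "search", "project": "shop"}},  # missing "environment"
]

by_team, untagged = attribute_costs(resources)
print(by_team, untagged)
print(cost_per_unit(by_team["checkout"], units=50_000))  # cost per transaction
```

Anything that lands in the untagged list is spend that nobody is accountable for, which makes it a natural first target for cleanup.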

FinOps Foundation reports that cost optimization is the top priority for 50% of cloud practitioners in 2025.

AI-Powered Automated Optimization

What It Does

AIOps and automated cloud optimization platforms handle continuous cost management without any human intervention.

How It Works

• Continuous right-sizing: AI analyzes utilization patterns and automatically adjusts instance sizes

• Workload rebalancing: automatically moves workloads to the cheapest available compute option

• Anomaly detection: ML-powered alerts catch unusual spend spikes before they compound

• Scheduled scale-down: automated policies shut down development and staging environments during nights and weekends
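The idea behind spend anomaly detection can be shown with a simple z-score check. Commercial platforms use more sophisticated models, so this is only a sketch of the principle. The threshold of 3 standard deviations and the sample figures are assumptions.

```python
from statistics import mean, stdev


def is_spend_anomaly(history: list[float], today: float, threshold: float = 3.0) -> bool:
    """Flag today's spend if it sits more than `threshold` standard deviations above the mean."""
    if len(history) < 2:
        raise ValueError("need at least two days of history")
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return today > mu
    return (today - mu) / sigma > threshold


# Hypothetical daily spend in dollars for the past week.
last_week = [100.0, 104.0, 98.0, 101.0, 97.0, 102.0, 99.0]

print(is_spend_anomaly(last_week, today=103.0))  # within normal variation
print(is_spend_anomaly(last_week, today=180.0))  # far above the usual range
```

Running a check like this daily catches a runaway resource within a day instead of at month end.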

CAST AI data from 2025 shows automated optimization delivers 50%+ cost savings with zero ongoing engineering effort, even for teams that were already manually optimizing.

Hidden Cost Traps

Even after adopting cloud-native architecture, many organizations leave significant money on the table. These are the blind spots that most cloud cost articles skip entirely.

Egress Costs

Moving data out of the cloud is charged per gigabyte. In multi-cloud and hybrid architectures where data moves constantly between providers and regions, egress fees can generate shock bills that dwarf compute costs. The fix is to design data flows at architecture time before you receive the bill.
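A quick estimate at design time makes egress visible before the invoice does. The sketch below is a simplification: it assumes a single flat per-gigabyte price and treats traffic that stays in the same location as free, which is not always true in practice. The locations, volumes, and the $0.09 price are hypothetical.

```python
def monthly_egress_cost(flows: list[tuple[str, str, float]], price_per_gb: float,
                        days: int = 30) -> float:
    """Estimate monthly egress cost from daily data flows.

    flows: (source, destination, gb_per_day). Only traffic that leaves its
    source location is counted as billable in this simplified model.
    """
    billable_gb_per_day = sum(gb for src, dst, gb in flows if src != dst)
    return billable_gb_per_day * price_per_gb * days


# Hypothetical data flows in a multi-cloud design.
flows = [
    ("cloud-a", "cloud-a", 500.0),  # stays inside one provider and region
    ("cloud-a", "cloud-b", 200.0),  # cross-provider replication
    ("cloud-b", "on-prem", 50.0),   # nightly export to the data centre
]

print(round(monthly_egress_cost(flows, price_per_gb=0.09), 2))
```

In this example the two cross-boundary flows cost about $675 per month, and that figure grows linearly with data volume.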

Toolchain Sprawl

The average engineering team runs 10 or more overlapping DevOps tools including multiple monitoring platforms, redundant CI/CD pipelines, and duplicate SaaS subscriptions. Auditing and consolidating to unified platforms like GitLab or GitHub Enterprise typically recovers significant spend with zero reduction in capability.

Snapshot Creep

Disk snapshots and database backups are created continuously, and without automated retention policies they are never deleted. Setting automated expiration policies typically recovers 10 to 15% of storage costs with a single configuration change.

The Lift-and-Shift Trap

Moving a monolith to cloud servers without redesigning it is cloud-hosted, not cloud-native. You pay cloud prices while your architecture operates with on-premises efficiency. The only way to capture cloud economics is to redesign for the cloud.

Wrong Region Pricing

Cloud pricing varies significantly by region. Running workloads in a premium-priced region when a cheaper region serves your users equally well is straightforward avoidable waste. Always compare region pricing explicitly during architecture design.
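Region choice can be framed as a small optimization: pick the cheapest region that still meets your latency requirement. The sketch below shows that rule with hypothetical region names, prices, and latencies. Real decisions also need to account for data residency and service availability, which this example leaves out.

```python
from typing import Optional


def cheapest_region(regions: dict[str, dict[str, float]],
                    max_latency_ms: float) -> Optional[str]:
    """Cheapest region whose user latency is within the limit, or None if none qualifies."""
    eligible = {name: info for name, info in regions.items()
                if info["latency_ms"] <= max_latency_ms}
    if not eligible:
        return None
    return min(eligible, key=lambda name: eligible[name]["hourly_price"])


# Hypothetical regions with measured user latency and an hourly instance price.
candidates = {
    "region-a": {"hourly_price": 0.24, "latency_ms": 20.0},
    "region-b": {"hourly_price": 0.19, "latency_ms": 45.0},
    "region-c": {"hourly_price": 0.15, "latency_ms": 140.0},
}

print(cheapest_region(candidates, max_latency_ms=60.0))
```

Here the cheapest region overall is excluded by the latency limit, so the second-cheapest wins.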

Security Retrofitting

Bolting security onto your architecture after migration is far more expensive than building it in from the start. DevSecOps practices embedded into CI/CD pipelines reduce security incidents by up to 75%. The cost of a single significant breach dwarfs years of prevention investment.

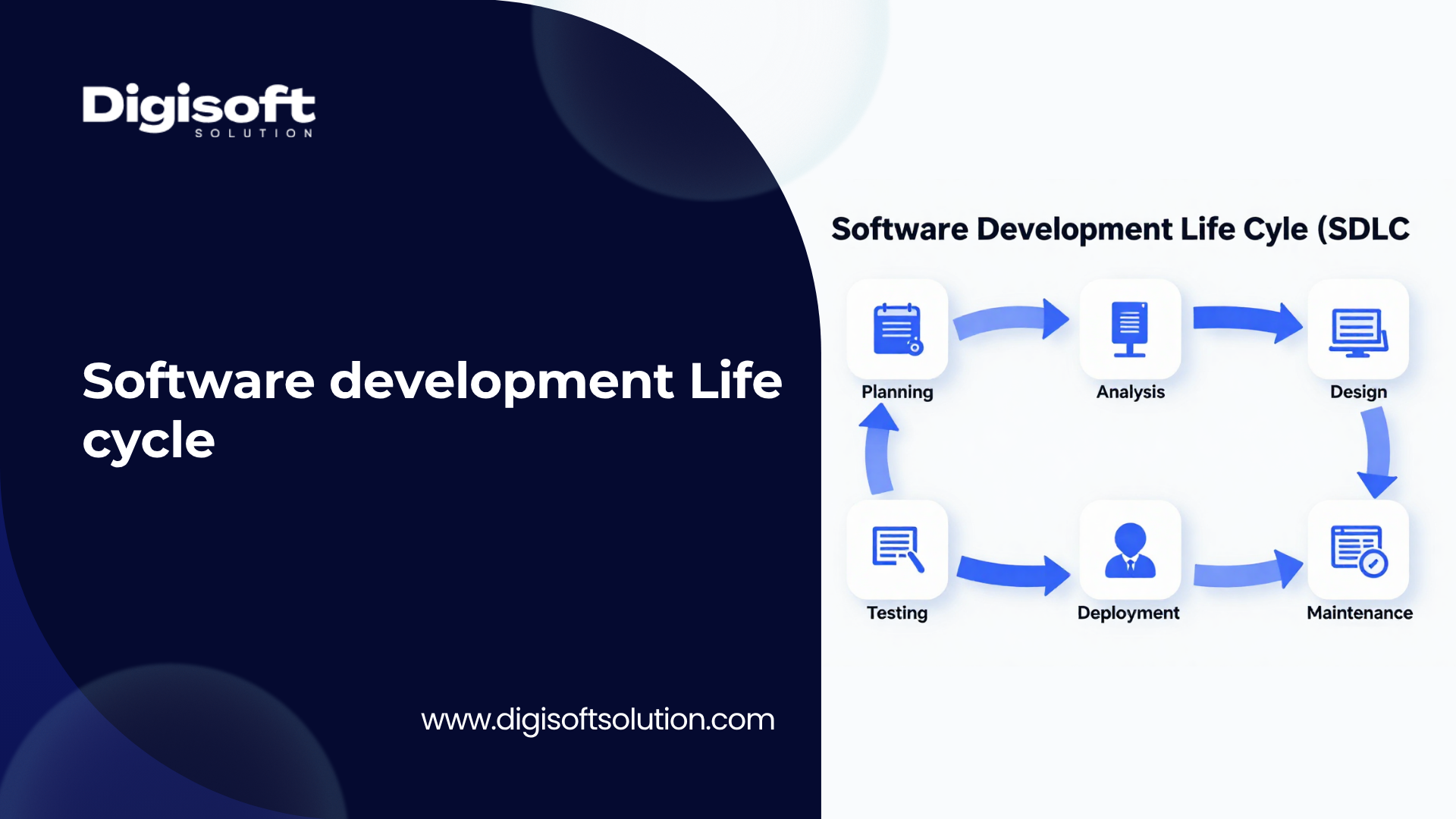

4-Phase Migration Roadmap

You do not need to rebuild everything at once. A phased approach lets you capture meaningful savings quickly while managing risk carefully. Most organizations see their first cost reductions within two weeks of starting Phase 1.

Phase 1: Visibility (Weeks 1 to 2)

Goal

Get complete visibility into where your money is going before changing any architecture. Zero architectural changes required.

Actions

• Enable AWS Cost Explorer, Azure Cost Management, or Google Cloud Cost Management

• Tag every existing resource by team, project, and environment

• Identify and immediately terminate orphaned resources, unused load balancers, and expired snapshots

• Calculate your current waste rate and set a reduction target

• Assign ownership for each spending category
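The orphan hunt and the waste rate calculation from the actions above can be prototyped against an exported resource inventory. This is a minimal sketch: the field names, the 14-day idle threshold, and the sample records are assumptions, and the definition of waste here (unattached and idle) is deliberately narrow.

```python
def find_orphans(resources: list[dict], idle_days_threshold: int = 14) -> list[str]:
    """IDs of resources attached to nothing and idle for at least the threshold."""
    return [r["id"] for r in resources
            if r["attached_to"] is None and r["idle_days"] >= idle_days_threshold]


def waste_rate(resources: list[dict], wasted_ids: list[str]) -> float:
    """Share of total monthly spend going to the resources identified as waste."""
    total = sum(r["monthly_cost"] for r in resources)
    if total == 0:
        return 0.0
    wasted = sum(r["monthly_cost"] for r in resources if r["id"] in wasted_ids)
    return wasted / total


# Hypothetical inventory export.
inventory = [
    {"id": "vm-prod", "monthly_cost": 700.0, "attached_to": "checkout", "idle_days": 0},
    {"id": "lb-old", "monthly_cost": 100.0, "attached_to": None, "idle_days": 60},
    {"id": "disk-test", "monthly_cost": 200.0, "attached_to": None, "idle_days": 30},
    {"id": "vm-new", "monthly_cost": 0.0, "attached_to": None, "idle_days": 2},
]

orphans = find_orphans(inventory)
print(orphans, round(100 * waste_rate(inventory, orphans), 1))
```

Tracking this percentage week over week gives the reduction target a measurable baseline.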

Expected Outcome

10 to 20% cost reduction within 2 weeks with zero architectural changes required.

Phase 2: Containerize (Months 1 to 2)

Goal

Containerize your most resource-intensive services without redesigning them. This is the fastest path to meaningful efficiency improvements.

Actions

• Dockerize your highest-cost services first, prioritized by billing impact

• Deploy to Kubernetes and configure Horizontal Pod Autoscaling with sensible thresholds

• Right-size containers based on observed CPU and memory utilization data rather than estimates

• Enable Cluster Autoscaler to remove idle nodes automatically

• Implement automated scale-down schedules for non-production environments
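Right-sizing from observed data usually means picking a high percentile of real usage and adding headroom. The sketch below shows one such rule using a nearest-rank percentile. The 95th percentile, the 20% headroom, and the sample values are assumptions, and tools such as the Kubernetes Vertical Pod Autoscaler use their own, more elaborate estimators.

```python
def recommend_cpu_request(samples_millicores: list[int], percentile: int = 95,
                          headroom_pct: int = 20) -> int:
    """CPU request in millicores: nearest-rank percentile of usage plus headroom."""
    if not samples_millicores:
        raise ValueError("need at least one utilization sample")
    ordered = sorted(samples_millicores)
    # Nearest-rank percentile using integer ceiling division.
    rank = max(1, -(-(percentile * len(ordered)) // 100))
    observed = ordered[rank - 1]
    # Add headroom, rounding up to a whole millicore.
    return -(-(observed * (100 + headroom_pct)) // 100)


# Hypothetical usage samples: mostly 100m, with one moderate and one extreme spike.
usage = [100] * 18 + [200, 900]

print(recommend_cpu_request(usage))
```

Using a percentile rather than the maximum keeps a single outlier from inflating the request, while the headroom leaves room for normal variation.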

Expected Outcome

20 to 35% infrastructure cost reduction from right-sizing and idle node elimination.

Phase 3: Microservices and Serverless (Months 3 to 6)

Goal

Decompose the most expensive monolithic components and migrate event-driven workloads to serverless. This is where the largest savings are captured.

Actions

• Identify your highest-cost services and decompose them into independent microservices

• Migrate batch jobs, image processing, event handlers, and notifications to serverless functions

• Implement Infrastructure as Code for all environments and eliminate manual provisioning

• Purchase Reserved Instances or Savings Plans for stable predictable production workloads

• Migrate suitable containerized workloads to ARM-based instances

• Consolidate your toolchain and eliminate overlapping SaaS subscriptions

Expected Outcome

40 to 60% total cost reduction compared to your pre-migration baseline.

Phase 4: Automate and Govern (Ongoing)

Goal

Install the systems and practices that make cost optimization continuous and automatic.

Actions

• Deploy automated optimization tools such as CAST AI or Spot.io for continuous right-sizing

• Establish team-level cost accountability with monthly FinOps review sessions

• Embed cloud cost awareness into engineering onboarding and architecture reviews

• Review and renew commitment-based discounts annually

• Set up anomaly detection alerts to catch unexpected spend spikes within hours, not at month end

Expected Outcome

50%+ ongoing savings sustained indefinitely with minimal continuing engineering effort.

Digisoft Solution for Cloud-Native Architecture

Digisoft Solution is a full-service software engineering company founded in 2013. With over 12 years of engineering expertise and a team of 60+ skilled engineers, Digisoft Solution specializes in building secure, scalable, cloud-native applications that reduce infrastructure costs and accelerate business growth.

Digisoft Solution is not a generic cloud reseller. The team designs and builds cloud-native systems from the ground up, using the same architecture patterns described in this guide, tailored to each client's specific workloads, technology stack, and cost profile.

What Digisoft Solution Does for Cloud-Native

Cloud-Native Application Development

Digisoft Solution builds API-first, containerized applications with horizontal scalability, identity federation, and secure multipoint access. Every application is designed for cloud-native deployment from day one, not adapted to it after the fact.

- Microservices architecture aligned with Domain-Driven Design and CQRS patterns

- Event-driven, loosely coupled services with resilience engineering and service mesh

- Containerized deployments using Docker and Kubernetes on AWS, Azure, and Google Cloud

- Serverless backends for event-driven workloads and APIs

- Horizontal scalability with autoscaling built into every service from the start

Infrastructure as Code and DevOps

Digisoft Solution implements Infrastructure as Code best practices to eliminate over-provisioning, reduce manual operations, and make every environment reproducible and auditable.

- Terraform-based IaC provisioning for cloud infrastructure across all major providers

- CI/CD pipeline automation using Azure DevOps and GitHub Actions

- Automated testing frameworks with 85%+ automated test coverage

- Backstage-based Internal Developer Platform for self-service IaC provisioning

- DevOps governance with DevEx metrics and golden-path microservice templates

DevSecOps and Security-First Architecture

Security is built into every stage of the development lifecycle at Digisoft Solution, not retrofitted after deployment. This approach reduces security incidents and eliminates one of the largest hidden cloud costs: breach remediation.

- Zero-trust security architecture embedded in CI/CD pipelines

- Automated vulnerability management and threat detection

- SIEM monitoring and regulatory compliance automation

- Claims-based and policy-based authorization middleware

- Encrypted device storage with secure cloud API integration

Legacy Application Modernization

Digisoft Solution has deep expertise in migrating legacy monolithic applications to cloud-native architectures. This is one of the most impactful and risk-prone transitions a business can make, and Digisoft Solution manages the entire process from assessment to production.

- Monolithic to microservices architecture refactoring

- Cloud migration with re-architecting and containerized deployment

- Modular refactoring, API enablement, and cloud readiness preparation

- Migration from legacy .NET Framework to modern .NET cloud-native environments

- Distributed transaction coordination and workflow engine implementation

AI and Intelligent Automation

Digisoft Solution integrates AI-powered automation into cloud-native architectures to drive continuous cost optimization and operational efficiency beyond what manual processes can achieve.

- Azure OpenAI and LLM orchestration with Semantic Kernel

- RAG pipelines with vector database integration for intelligent applications

- AI governance with audit trails and explainability

- Predictive analytics and intelligent workflow automation

- AIOps integration for automated infrastructure optimization and anomaly detection

Managed Services and Continuous Optimization

Digisoft Solution does not hand over a project and disappear. Long-term managed services and continuous optimization are a core part of every engagement.

- Lifecycle-driven maintenance ensuring runtime stability and security governance

- Performance optimization and horizontal scalability monitoring

- Agile feature evolution with 2-week sprint cycles

- Real-time cloud cost monitoring and waste rate reporting

- Proactive identification of cost reduction opportunities across the infrastructure

The Cloud-Native Cost Optimization Toolchain

Start with your cloud provider's native tools. They are free and powerful. Add third-party platforms selectively as your maturity and needs grow.

Cost Visibility Tools

AWS Cost Explorer

Real-time spend breakdown, usage patterns, and savings plan recommendations. Free with any AWS account.

Azure Cost Management

Budget alerts, detailed resource tagging, and cross-service cost analysis. Free with any Azure subscription.

Google Cloud Cost Management

Billing dashboards, committed use discount tracking, and budget alerts built into the GCP console.

Infrastructure and Orchestration Tools

Terraform

The most widely used Infrastructure as Code tool. Provision and manage cloud infrastructure across AWS, Azure, and GCP from version-controlled code.

Kubernetes with HPA

Container orchestration with built-in autoscaling. The foundation of any serious cloud-native architecture.

AWS Lambda, Azure Functions, Google Cloud Functions

The three major serverless platforms. All support event-driven compute with per-execution billing and no idle cost.

Automated Optimization Tools

CAST AI

AI-powered continuous right-sizing, Spot Instance automation, and workload optimization. Reports 50%+ cost savings in production environments.

Spot.io by NetApp

Automates the use of Spot and Reserved Instances to maximize savings on compute spend across all major cloud providers.

AWS Graviton and Azure Ampere ARM Instances

20 to 40% lower cost per compute unit compared to x86. Well-supported by containerized workloads and increasingly the default choice for new deployments.

Observability Tools

Datadog

Resource utilization monitoring, anomaly detection, and cost attribution by service and team.

New Relic

Full-stack observability with infrastructure cost monitoring and alerting.

Prometheus and Grafana

Open-source observability stack that is free, widely adopted, and highly customizable for any cloud environment.

What Your Business Actually Gains

Cloud-native architecture does not just reduce your cloud bill. It changes how your entire engineering organization operates and what it is capable of delivering.

Cost Reduction

35 to 60% lower cloud costs through right-sizing, autoscaling, smart instance purchasing, and serverless adoption. Direct, measurable, and permanent.

Deployment Speed

40% faster deployment cycles through CI/CD automation and containerization. Features reach users faster and engineering teams spend less time on manual operations.

Security Posture

75% fewer security incidents through DevSecOps practices embedded in CI/CD pipelines. Breach remediation is one of the largest hidden costs in any organization and this significantly reduces that risk.

Scalability

Handle 10x traffic spikes without pre-buying capacity. Autoscaling means you never have to choose between performance and cost.

Operational Efficiency

80% reduction in operational overhead through automated recovery, scaling, and monitoring. A lean team can manage infrastructure that previously required a dedicated operations department.

Cost Transparency

Every dollar attributed to a team, service, or product line. Accountability drives better architecture decisions faster than any top-down mandate.

Frequently Asked Questions

Is cloud-native only for large enterprises?

No. Startups often benefit the most. Building cloud-native from the beginning means you never have the overbuilt monolith problem to solve later. A team of five can operate infrastructure that previously required 20 people in an on-premises environment.

Do we need to rewrite our entire application?

No. A phased migration is the standard approach. Start by containerizing existing services and adding autoscaling to see meaningful savings within weeks without redesigning anything. Then gradually decompose components into microservices and introduce serverless for new features. There is no requirement to do everything at once.

How does cloud-native handle multi-cloud or hybrid environments?

Infrastructure as Code tools like Terraform are cloud-agnostic and can provision across AWS, Azure, and GCP from the same codebase. Kubernetes runs on any cloud. The main challenge in multi-cloud setups is egress costs between providers, which must be planned at architecture time. Hybrid setups also benefit from cloud-native patterns because containers and IaC work identically on-premises and in the cloud.

How quickly will we see real cost savings?

Phase 1 covering visibility and orphaned resource cleanup typically delivers 10 to 20% savings within two weeks with zero architectural changes. Phase 2 covering containerization and autoscaling delivers an additional 20 to 35% over one to two months. Full optimization across all four phases takes three to six months but the savings compound permanently over time.

What is the biggest risk of going cloud-native?

Microservice sprawl. If you decompose too aggressively without strong governance, you end up with hundreds of poorly documented overlapping services that are harder and more expensive to operate than the original monolith. The mitigation is clear service ownership, centralized observability, and Infrastructure as Code for everything from day one.

Which cloud provider is best for cloud-native?

All three major providers (AWS, Azure, and Google Cloud) have mature, full-featured cloud-native ecosystems. AWS has the largest serverless and managed service catalogue. Azure integrates most deeply with Microsoft enterprise tooling. Google Cloud has the most advanced Kubernetes capabilities, which is expected given that Kubernetes originated at Google. The right choice depends on your existing stack, team expertise, and vendor relationships.

What if our team does not have cloud-native expertise?

This is the most common reason organizations do not capture the savings available to them. The technical skills exist but the practical challenge is applying them to your specific architecture, workloads, and cost profile. That is exactly where expert guidance accelerates outcomes significantly and reduces the risk of common mistakes.


Kapil Sharma
