Table of Contents

- What Is an AI Agent? The 2026 Definition

- What Makes an AI Agent Different from Regular Automation

- The Agent Stack: What's Actually Inside

- The 9 Types of AI Agents in 2026

- 1. Simple Reflex Agents

- 2. Model-Based Reflex Agents

- 3. Goal-Based Agents

- 4. Utility-Based Agents

- 5. Learning Agents

- 6. Multi-Agent Systems

- 7. Agentic RAG Agents: The 2026 Enterprise Standard

- 8. Autonomous Agents: Fully Agentic Systems

- 9. Hierarchical and Orchestrator Agents

- Agent Type Quick Reference

- Cross-Cutting Challenges Every Business Faces in 2026

- Governance Before Deployment, Not After

- The Cold Start Problem Across Agent Types

- Cost Architecture Is a First-Class Design Concern

- Security Attack Surfaces Are Multiplying

- Integration With Legacy Systems

- Industry Use Cases

- Healthcare and Life Sciences

- Financial Services

- E-commerce and Retail

- Legal and Compliance

- Manufacturing and Supply Chain

- How to Choose the Right AI Agent Type for Your Business

- Step 1: Classify Your Environment

- Step 2: Define Your Performance Budget

- Step 3: Map Your Data and Tool Access

- Step 4: Plan Your Governance Before Building

- Step 5: Start Narrow and Expand

- The Trends Shaping the Rest of 2026 and Beyond

- Smaller, Specialized Models Will Replace One-Size-Fits-All LLMs for Many Agent Tasks

- Agent-to-Agent Protocols Are Standardizing

- Governance Agents Are Becoming Standard Infrastructure

- Human-in-the-Loop Is Being Redesigned, Not Reduced

- Agentic AI Is Becoming the Competitive Infrastructure Layer

- Choose the Right AI Agent for Your Business Needs with Digisoft Solution

- What Digisoft Solution Delivers

- Industries We Serve

- Conclusion

- 79% of companies now use AI agents in some capacity (PwC AI Agent Survey)

- Market size: $7.84 billion in 2025, projected to exceed $52 billion by 2030 at a 46.3% CAGR

- Gartner predicts 40% of enterprise applications will embed AI agents by end of 2026

- IDC expects AI copilots to be embedded in 80% of enterprise workplace applications by 2026

- Salesforce reports a 282% jump in enterprise AI adoption in recent years

If you are building a product, running operations, or managing customers in 2026, you are probably already surrounded by AI agents even if you do not call them that. The spam filter in your inbox, the chatbot resolving support tickets at 2 a.m., the recommendation engine nudging your users toward checkout: these are all forms of AI agents at work.

But as AI technology has matured, the definition of an AI agent has expanded dramatically. Today's agents do not just respond to inputs. They plan, reason, retrieve real-time data, coordinate with other agents, and execute multi-step workflows across your entire tech stack. The businesses that understand which agent type fits which problem are already pulling ahead.

This guide breaks down every major type of AI agent relevant in 2026, explains how each works technically, details real-world use cases competitors overlook, walks through the hardest deployment challenges you will actually face, and gives you a framework for choosing what is right for your situation. Nothing theoretical: only what works in production.

What Is an AI Agent? The 2026 Definition

What Makes an AI Agent Different from Regular Automation

Most automation tools follow a script. They execute a fixed sequence of steps and stop when the steps are done. AI agents are architecturally different. An AI agent is a software system that can perceive its environment, reason about what to do next, take action using available tools, evaluate the result, and adapt its next move based on what happened.

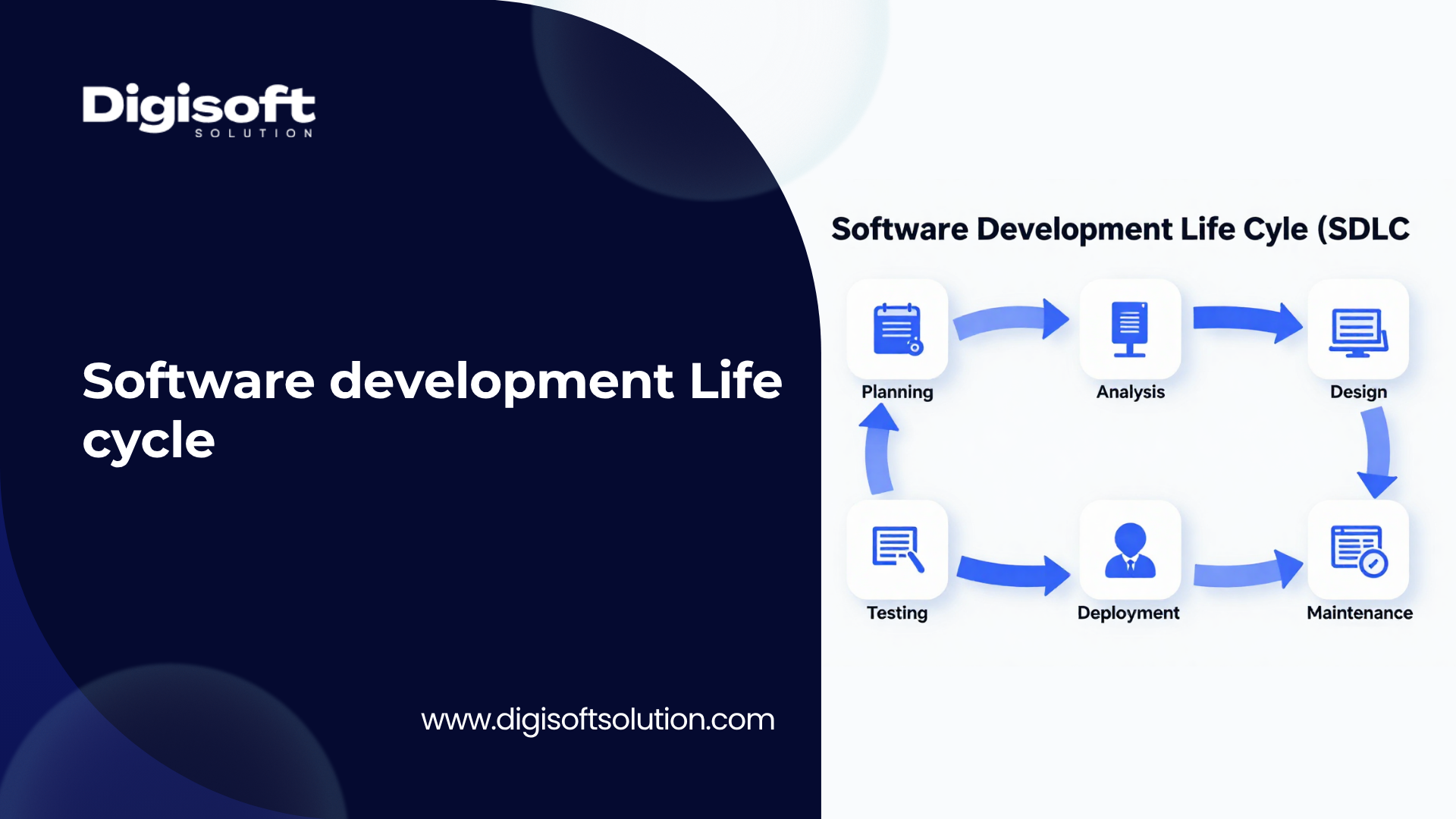

The four-part loop that defines every AI agent is: Sense, Think, Act, and Correct. The agent collects input from users, APIs, databases, files, or sensors. It reasons about what the input means and what to do next. It executes a tool call, API request, database query, or workflow step. Then it evaluates the result and decides whether to loop, escalate, or conclude.
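As a minimal sketch of the Sense, Think, Act, Correct loop, the following Python runs it against a toy thermostat. The `Thermostat` class, the `run_agent` function, and the target temperature are hypothetical names chosen for illustration and do not come from any agent framework.

```python
from dataclasses import dataclass


@dataclass
class Thermostat:
    """Toy environment (hypothetical): a room whose temperature the agent can nudge."""
    temperature: float

    def read(self) -> float:
        return self.temperature

    def apply(self, action: str) -> None:
        if action == "heat":
            self.temperature += 1.0
        elif action == "cool":
            self.temperature -= 1.0


def run_agent(env: Thermostat, target: float, max_steps: int = 20) -> list:
    """Run the loop until the target is reached or the step budget runs out."""
    log = []
    for _ in range(max_steps):
        reading = env.read()                              # Sense: collect input
        if abs(reading - target) < 0.5:                   # Correct: evaluate and conclude
            log.append("done")
            return log
        action = "heat" if reading < target else "cool"   # Think: decide the next move
        env.apply(action)                                 # Act: execute the step
        log.append(action)
    log.append("escalate")                                # Correct: budget exhausted, hand off
    return log


room = Thermostat(temperature=18.0)
history = run_agent(room, target=21.0)
```

In an LLM-based agent the Think step would be a model call and the Act step a tool call, but the control flow keeps the same shape.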

What separates 2026-era agents from earlier chatbots or RPA bots is the combination of large language model reasoning, external tool access, memory systems, and the ability to chain multiple actions together without human instruction at every step. A chatbot answers a question. An AI agent completes a task.

The Agent Stack: What's Actually Inside

Most production-grade LLM-based agents today are built on six components working together.

- LLM Core: The reasoning engine. Models like GPT-4o, Claude 3.5 Sonnet, Gemini 1.5 Pro, or open-source alternatives like Llama 3.2 drive decision-making

- Tool Layer: APIs, web browsers, code interpreters, databases, CRM systems, and any external service the agent can call

- Memory System: Short-term context (conversation history), long-term storage (vector databases like FAISS, Weaviate, or Pinecone), and episodic memory for learning from past interactions

- Orchestration Framework: Libraries like LangGraph, Microsoft AutoGen, CrewAI, or LlamaIndex that structure agent behavior, manage tool routing, and handle error recovery

- Retrieval Layer (RAG): The mechanism for pulling relevant, up-to-date information from private knowledge bases or the live web before generating a response

- Governance and Observability: Logging, audit trails, policy guardrails, and human-in-the-loop checkpoints that make agents safe to run in production

Understanding this stack matters because different agent types use these components differently. A simple reflex agent does not use a memory system or LLM core at all. An agentic RAG system depends heavily on the retrieval layer. A multi-agent system orchestrates all six across dozens of specialised agents simultaneously.

The 9 Types of AI Agents in 2026

1. Simple Reflex Agents

Simple reflex agents are the oldest and most straightforward class of AI agent. They operate entirely on condition-action rules: if this input matches this condition, then execute this action. There is no memory, no reasoning, no learning, and no ability to handle anything outside the predefined rule set.

How It Works Technically

The agent reads the current input, matches it against a lookup table of if-then rules, and fires the corresponding action. The process completes in a single pass. There is no state tracking between interactions. If the environment changes in an unexpected way that has no matching rule, the agent either does nothing or executes the wrong action.
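The rule lookup can be sketched in a few lines of Python. The sensor field names, thresholds, and action names below are assumptions made up for this example.

```python
from typing import Callable, List, Tuple

# Each rule pairs a condition on the current reading with an action name.
# Thresholds and action names are hypothetical.
Rule = Tuple[Callable[[dict], bool], str]

RULES: List[Rule] = [
    (lambda r: r["temperature_c"] > 90, "shutdown"),
    (lambda r: r["pressure_kpa"] > 500, "open_relief_valve"),
    (lambda r: r["vibration_mm_s"] > 12, "raise_alert"),
]


def reflex_agent(reading: dict) -> str:
    """Return the action of the first matching rule; no state is kept between calls."""
    for condition, action in RULES:
        if condition(reading):
            return action
    return "no_op"  # nothing matched, so the agent does nothing


normal = {"temperature_c": 70, "pressure_kpa": 300, "vibration_mm_s": 4}
overheated = {"temperature_c": 95, "pressure_kpa": 300, "vibration_mm_s": 4}
```

Calling `reflex_agent(overheated)` fires the first rule, while `reflex_agent(normal)` falls through to `no_op`, which is the "does nothing" failure mode for inputs no rule covers.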

Where Businesses Actually Use Them in 2026

- Email spam filters that flag messages based on keyword patterns and sender reputation

- Industrial IoT sensors that trigger shutdown commands when temperature, pressure, or vibration crosses a threshold

- Retail self-checkout systems that flag items when barcode weight does not match expected values

- Network monitoring tools that send alerts when packet loss exceeds a fixed percentage

- Customer service call routing that sends callers to billing, technical support, or sales based on IVR selections

Genuine Benefits

- Fast Decision-Making: Immediate rule-based responses enable microsecond response times with zero LLM inference overhead

- Zero Hallucination Risk: The agent can only do what its rules allow, making outputs fully predictable

- Low Cost to Run: No GPU, no API calls, no compute-intensive inference required

- Audit-Ready: Every action is traceable to a specific rule, making compliance straightforward

Real Deployment Challenges

- Rule explosion: Complex environments require thousands of rules, and maintaining them becomes a full-time job

- Zero adaptability: A single new scenario not covered in the rules breaks the agent entirely

- No contextual understanding: The agent cannot distinguish between two inputs that look similar but mean different things in context

- Hidden failure modes: The agent appears to work until it silently executes the wrong rule in an edge case

When to Use It: Simple reflex agents are the right choice when your environment is fully observable, your conditions are stable and well-defined, and response speed matters more than flexibility. Manufacturing floor monitoring, basic compliance triggers, and fixed-logic routing systems are ideal fits.

2. Model-Based Reflex Agents

Model-based reflex agents extend the simple reflex design by adding internal state, a representation of the world that persists between inputs. This allows the agent to handle environments where not all relevant information is visible at any given moment.

How It Works Technically

The agent maintains an internal model updated continuously as new inputs arrive. When making a decision, it combines the current input with its internal model to infer hidden or missing state, then applies a rule. This two-step process, update model then apply rule, allows it to reason about things it cannot directly observe.
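A rough illustration of the update-model-then-apply-rule sequence, using the obscured-vehicle case: the `ObstacleTracker` class, its constant-closing-speed assumption, and the 10 m braking threshold are all hypothetical simplifications.

```python
from typing import Optional


class ObstacleTracker:
    """Remembers the last known distance and closing speed of an obstacle."""

    def __init__(self, brake_distance_m: float = 10.0):
        self.brake_distance_m = brake_distance_m   # hypothetical threshold
        self.distance_m: Optional[float] = None    # internal model of the world
        self.closing_speed_m: float = 0.0          # metres closed per tick

    def step(self, observed_distance_m: Optional[float]) -> str:
        # Step 1: update the internal model.
        if observed_distance_m is not None:
            if self.distance_m is not None:
                self.closing_speed_m = self.distance_m - observed_distance_m
            self.distance_m = observed_distance_m
        elif self.distance_m is not None:
            # Obstacle hidden this tick: infer its position from the last known motion.
            self.distance_m -= self.closing_speed_m

        # Step 2: apply a condition-action rule to the model, not to the raw input.
        if self.distance_m is not None and self.distance_m < self.brake_distance_m:
            return "brake"
        return "cruise"


tracker = ObstacleTracker()
actions = [tracker.step(d) for d in (30.0, 22.0, None, None)]
```

The last two readings are `None` (the obstacle is obscured), yet the agent still brakes on the final tick because its model estimates the obstacle has closed to 6 m.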

Where Businesses Use Them in 2026

- Autonomous vehicle systems that track surrounding vehicles even when temporarily obscured by other objects

- HVAC management systems that track thermal history to anticipate heating or cooling needs before occupants feel discomfort

- Fraud detection pipelines that maintain user behavioral baselines and flag deviations over time

- Customer service bots that remember earlier messages in a conversation when forming a response to a new question

- Supply chain monitoring agents that track inventory movement history to predict stockout risks

Real Deployment Challenges

- Model drift: The internal model can become stale if not updated frequently enough relative to how fast the environment changes

- Memory overhead: Storing and querying state for millions of concurrent sessions at enterprise scale requires careful infrastructure design

- Still rule-dependent: The reasoning layer is still condition-action, not true planning or optimization, which limits its usefulness for complex tasks

3. Goal-Based Agents

Goal-based agents introduce intentional decision-making. Instead of matching inputs to rules, they evaluate available actions based on whether those actions move the system closer to a defined goal. This means the agent can plan ahead across multiple steps and choose different paths to the same outcome depending on context.

How It Works Technically

The agent is given a goal state and uses search algorithms such as breadth-first search, A*, MCTS, or LLM-guided planning to find action sequences that reach that state. It evaluates each candidate action by predicting its consequences and selecting the path that leads most efficiently to the goal.
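To make the search step concrete, here is a small breadth-first planner, one of the algorithms named above. The `ROADS` map and location names are invented for the example; a production planner would search a far larger state space, usually with a cost-aware method such as A*.

```python
from collections import deque

# Hypothetical road map: each location lists the locations reachable in one step.
ROADS = {
    "depot": ["north_hub", "east_hub"],
    "north_hub": ["customer"],
    "east_hub": ["south_hub"],
    "south_hub": ["customer"],
    "customer": [],
}


def plan(start, goal, graph):
    """Breadth-first search: return the shortest path from start to goal, or None."""
    frontier = deque([[start]])
    visited = {start}
    while frontier:
        path = frontier.popleft()
        if path[-1] == goal:
            return path
        for nxt in graph.get(path[-1], []):
            if nxt not in visited:
                visited.add(nxt)
                frontier.append(path + [nxt])
    return None


route = plan("depot", "customer", ROADS)
```

Because breadth-first search expands paths in order of length, the agent finds the two-step route through `north_hub` before it finishes exploring the longer route through `east_hub`.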

Where Businesses Use Them in 2026

- Navigation and logistics: Route optimization agents that plan delivery sequences across hundreds of stops while minimizing fuel and time

- AI sales development reps: Agents that autonomously identify prospects, craft outreach, follow up, and schedule meetings

- Code generation agents: Given a feature requirement as the goal, the agent plans which files to create or modify, writes the code, runs tests, and iterates

- Research automation: Agents that, given a question, plan which sources to consult, what data to pull, and how to structure a summary

- Workflow orchestration: Project management agents that break large deliverables into subtasks and assign them across systems or human team members

The Planning Depth Problem

Most articles describe goal-based agents as if planning is free. In practice, planning depth, how many steps ahead the agent reasons before acting, is the single biggest lever controlling both performance and cost. Shallow planning of one to two steps ahead is fast but fails on complex tasks. Deep planning of five or more steps ahead produces better outcomes but multiplies LLM inference costs and latency. Production deployments need to cap planning depth based on task complexity and budget, not just set the agent loose.
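One way to picture a planning depth cap is a depth-limited search that also counts how many states it expands. In this sketch the expansion count stands in for LLM calls; the `STEPS` graph and the `plan_with_cap` function are hypothetical.

```python
# Hypothetical task graph: each state lists the states reachable with one action.
STEPS = {
    "ticket_opened": ["logs_fetched"],
    "logs_fetched": ["root_cause_found"],
    "root_cause_found": ["fix_drafted"],
    "fix_drafted": ["fix_verified"],
    "fix_verified": [],
}


def plan_with_cap(state, goal, graph, max_depth):
    """Depth-limited search: return (path or None, number of states expanded)."""
    expanded = 0

    def search(current, depth):
        nonlocal expanded
        expanded += 1
        if current == goal:
            return [current]
        if depth == max_depth:
            return None  # planning budget exhausted on this branch
        for nxt in graph.get(current, []):
            rest = search(nxt, depth + 1)
            if rest is not None:
                return [current] + rest
        return None

    return search(state, 0), expanded


shallow_plan, shallow_cost = plan_with_cap("ticket_opened", "fix_verified", STEPS, max_depth=2)
deep_plan, deep_cost = plan_with_cap("ticket_opened", "fix_verified", STEPS, max_depth=5)
```

With a cap of two the planner gives up without a plan after three expansions; with a cap of five it reaches the goal at the price of five. On a branching graph the gap between the two budgets grows much faster than in this linear toy.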

4. Utility-Based Agents

Utility-based agents add a preference layer on top of goal-based planning. Rather than simply finding any path to the goal, they evaluate every possible action with a utility function that quantifies how desirable each outcome is. When multiple actions lead to the goal, the agent picks the one that maximizes expected utility.

How It Works Technically

The agent assigns a numerical utility score to each possible action or outcome, considering factors like cost, risk, time, quality, user satisfaction, or business value. It then selects the action that maximizes the expected utility score, often combining probabilistic outcome modeling with optimization algorithms.
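A minimal sketch of expected-utility selection, framed as a pricing decision. The unit cost, the candidate prices, and the sale probabilities are made-up numbers; in practice the probabilities would come from a demand model.

```python
# Hypothetical candidate prices mapped to an estimated probability of a sale.
UNIT_COST = 40.0
CANDIDATES = {
    49.0: 0.60,
    59.0: 0.45,
    69.0: 0.25,
}


def expected_utility(price: float, sale_probability: float) -> float:
    """Expected margin per visitor: probability of a sale times profit if sold."""
    return sale_probability * (price - UNIT_COST)


def choose_price(candidates: dict) -> float:
    """Pick the action (price) with the highest expected utility."""
    return max(candidates, key=lambda p: expected_utility(p, candidates[p]))


best_price = choose_price(CANDIDATES)
```

The lowest price sells most often and the highest price earns the most per sale, but the middle price wins on expected margin (0.45 × 19 = 8.55 against 5.40 and 7.25).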

Where Businesses Use Them in 2026

- E-commerce recommendation engines: Ranking products by the probability-weighted utility of conversion, basket size, and return likelihood

- Dynamic pricing agents: Continuously adjusting prices based on demand signals, competitor data, inventory levels, and margin targets simultaneously

- Healthcare treatment planning agents: Selecting from multiple valid treatment protocols by modeling expected outcomes, side effect risk, and patient preference weighting

- Financial portfolio agents: Rebalancing assets by optimizing risk-adjusted return across thousands of possible allocation combinations

- Content moderation agents: Weighing the utility of removing borderline content against the cost of false positives for legitimate users

The Hidden Challenge: Utility Function Design

Building the utility function is the hardest part of deploying a utility-based agent. If the function does not perfectly capture what the business actually values, the agent will optimize for the wrong thing. A customer service utility function that maximizes resolution speed without penalizing poor satisfaction scores will produce fast but low-quality interactions. Defining, testing, and iterating on utility functions requires domain experts, data scientists, and product stakeholders working together before a single line of agent code is written.
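The customer service example can be shown with two candidate utility functions over the same outcomes. The strategy names, outcome figures, and the satisfaction weight of 5.0 are illustrative assumptions, not measured values.

```python
# Hypothetical outcomes for two support strategies (satisfaction on a 1-5 scale).
STRATEGIES = {
    "close_fast": {"minutes_to_resolve": 4, "satisfaction": 2.1},
    "diagnose_fully": {"minutes_to_resolve": 11, "satisfaction": 4.6},
}


def speed_only(outcome: dict) -> float:
    """Rewards fast resolution and ignores everything else."""
    return -outcome["minutes_to_resolve"]


def speed_and_satisfaction(outcome: dict, satisfaction_weight: float = 5.0) -> float:
    """Same speed term, plus a weighted reward for customer satisfaction."""
    return -outcome["minutes_to_resolve"] + satisfaction_weight * outcome["satisfaction"]


def best(utility) -> str:
    """Return the strategy that scores highest under the given utility function."""
    return max(STRATEGIES, key=lambda name: utility(STRATEGIES[name]))


naive_choice = best(speed_only)
balanced_choice = best(speed_and_satisfaction)
```

Nothing about the agent changes between the two calls. Only the utility function does, and that alone flips the chosen behavior, which is why the weights deserve review by the people who own the business outcome.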

5. Learning Agents

Learning agents are the most adaptive class. They improve their behavior over time through experience, feedback, and data rather than relying on static rules or pre-programmed utility functions. In 2026, learning agents built on reinforcement learning from human feedback, supervised fine-tuning, and in-context learning from retrieved examples are central to most advanced AI product experiences.

How It Works Technically

A learning agent has four internal subsystems: a learning element that updates the agent's model based on feedback signals, a performance element that executes actions based on the current model, a critic that evaluates performance and generates feedback, and a problem generator that identifies new situations to explore. In practice, this maps to modern ML training pipelines, evaluation frameworks, and human-in-the-loop feedback systems.
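The four subsystems can be sketched as a tiny bandit-style learner. This is a deliberately simplified stand-in, not a full reinforcement learning pipeline: the template names, the fixed feedback scores, and the explore-every-tenth-step schedule are all assumptions for illustration.

```python
class LearningAgent:
    def __init__(self, actions, explore_every=10):
        self.actions = list(actions)
        self.values = {a: 0.0 for a in self.actions}   # learned value estimates
        self.counts = {a: 0 for a in self.actions}
        self.explore_every = explore_every
        self.step = 0

    def problem_generator(self):
        """Every Nth step, propose an action to try regardless of its current estimate."""
        if self.step % self.explore_every == 0:
            return self.actions[(self.step // self.explore_every) % len(self.actions)]
        return None

    def performance_element(self):
        """Choose what to do: explore if asked, otherwise exploit the best estimate."""
        return self.problem_generator() or max(self.actions, key=self.values.get)

    def learning_element(self, action, feedback):
        """Update the running average for the action that was taken."""
        self.counts[action] += 1
        self.values[action] += (feedback - self.values[action]) / self.counts[action]
        self.step += 1


def critic(action):
    """Stand-in feedback signal: hypothetical average satisfaction per reply template."""
    return {"template_a": 0.2, "template_b": 0.8}[action]


agent = LearningAgent(["template_a", "template_b"])
for _ in range(50):
    chosen = agent.performance_element()
    agent.learning_element(chosen, critic(chosen))
```

The agent starts out preferring `template_a` only because it tried it first. The problem generator forces a trial of `template_b` on step 10, the critic scores it higher, and from then on the performance element mostly picks the better template.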

Where Businesses Use Them in 2026

- Personalisation engines: Streaming platforms, news apps, and e-commerce stores that continuously learn individual preferences from implicit and explicit signals

- Customer support AI: Agents that identify patterns in unresolved tickets and generate new response strategies without human rule-writing

- Predictive maintenance: Industrial agents that learn equipment failure signatures from sensor data and improve prediction accuracy as more failure events accumulate

- Ad optimization agents: Systems that learn which creative, audience segment, and bid combination maximizes ROAS across millions of impressions in real time

- Security threat detection: Agents that learn normal network behavior and continuously refine their anomaly models as new attack patterns emerge

Cold Start and Feedback Quality

Every learning agent has a cold start problem: it performs poorly when first deployed because it has no experience. You need either a warm-start strategy using pre-collected data, a staged rollout that limits exposure during the learning phase, or explicit human oversight until the agent reaches acceptable performance baselines. Equally important is feedback quality. A learning agent is only as good as the signals it learns from. Noisy, delayed, or biased feedback will teach the agent exactly the wrong behaviors.

6. Multi-Agent Systems

Multi-agent systems place multiple autonomous agents into a shared environment where they collaborate, coordinate, or compete to accomplish goals too large or complex for any single agent. In 2026, multi-agent systems have become the dominant architecture for complex enterprise AI deployments because individual specialized agents outperform generalist single agents on domain-specific subtasks, and because multi-agent systems scale in ways that monolithic agents cannot.

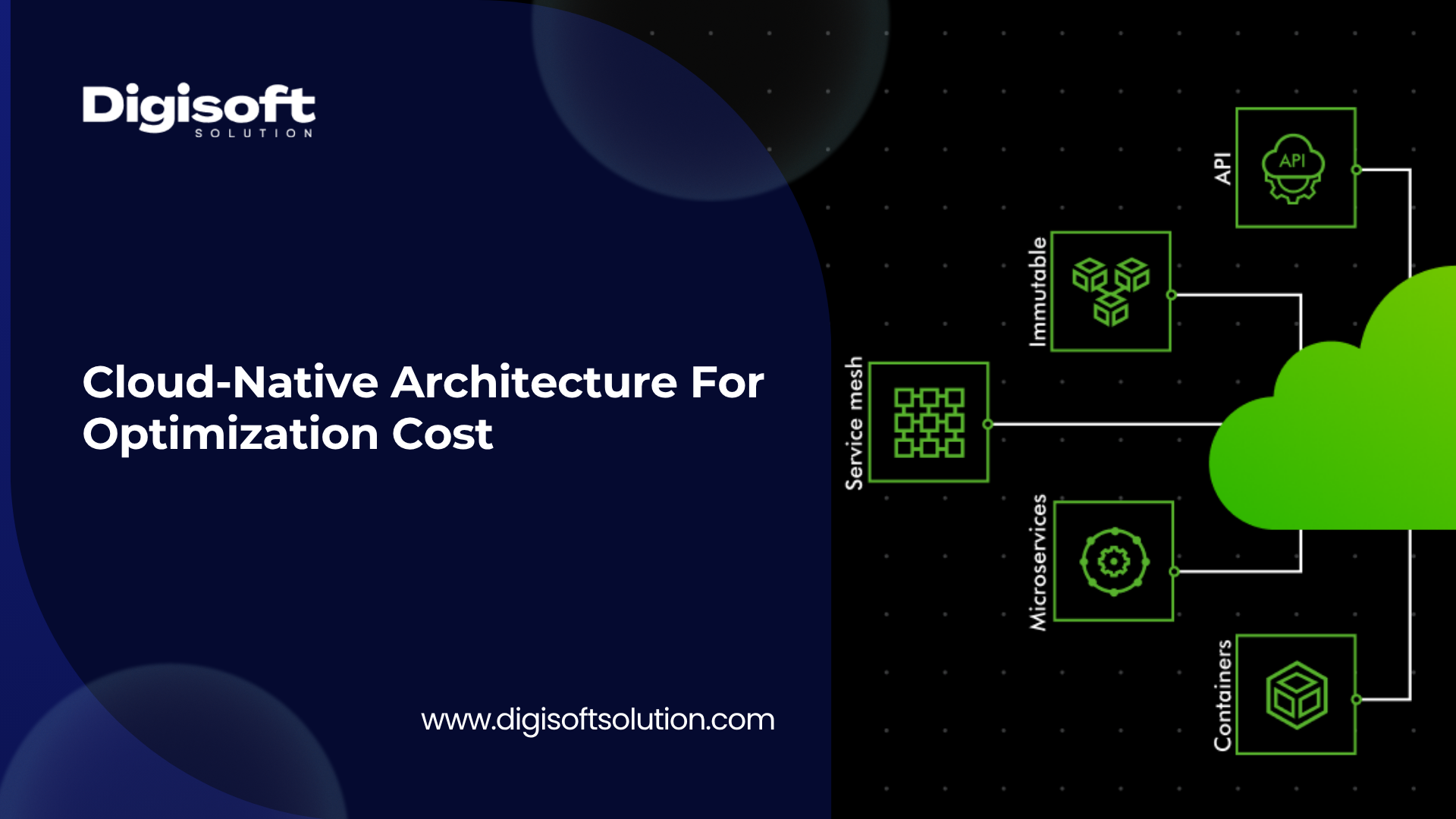

How It Works Technically

In a multi-agent system, a supervisor or orchestrator agent decomposes a high-level goal into subtasks and assigns them to specialized worker agents. Worker agents execute their assigned tasks, report results, and may trigger additional agents if the subtask requires further decomposition. Agents communicate through shared memory, message passing, or structured API calls. The leading frameworks in 2026 include LangGraph for stateful graph-based coordination, Microsoft AutoGen for enterprise-grade pipelines, CrewAI for role-based collaboration, and LlamaIndex AgentWorkflow for document-heavy processing tasks.
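Stripped of any framework, the supervisor and worker pattern with message passing looks roughly like this. The worker functions are plain Python stand-ins for LLM-backed agents, and the hard-coded decomposition is an assumption; this sketch does not use the LangGraph, AutoGen, or CrewAI APIs.

```python
from queue import Queue


# Hypothetical worker agents: plain functions standing in for LLM-backed agents.
def research_worker(task: str) -> str:
    return f"notes on {task}"


def writer_worker(task: str) -> str:
    return f"draft of {task}"


WORKERS = {"research": research_worker, "write": writer_worker}


def supervisor(goal: str) -> list:
    """Decompose the goal, dispatch subtasks as messages, and collect the results."""
    inbox = Queue()
    # Decomposition is hard-coded here; a real supervisor would typically ask an LLM.
    subtasks = [("research", goal), ("write", goal)]
    for role, task in subtasks:
        inbox.put({"role": role, "task": task})

    results = []
    while not inbox.empty():
        message = inbox.get()
        worker = WORKERS[message["role"]]   # route the message to the matching specialist
        results.append(worker(message["task"]))
    return results


outputs = supervisor("Q3 market report")
```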

Where Businesses Use Them in 2026

- Software development pipelines: Planner agents decompose feature requests, coder agents write implementation, test agents validate, and review agents check for security vulnerabilities, all concurrently

- Market intelligence: Research agents scrape and retrieve data from multiple sources while analysis agents process, compare, and synthesize findings simultaneously

- Enterprise customer support: Tier-1 agents handle common queries while escalation agents route complex issues to specialist agents with access to billing systems, product databases, or compliance records

- Financial reporting: Data extraction agents pull figures from multiple systems while calculation agents compute metrics and formatting agents produce the final document

- Clinical decision support: Research agents retrieve medical literature, verification agents cross-check drug interactions, synthesis agents combine findings with patient history, and governance agents enforce HIPAA-compliant data access

Coordination Overhead Is Real

Multi-agent systems introduce a class of problems that single-agent deployments do not have: coordination overhead, consistency failures, and debugging complexity. When two agents retrieve contradictory information and both send their results to a synthesis agent, the system needs explicit conflict resolution logic or the final output will be wrong. When one agent fails mid-task, the system needs fault-isolation and redistribution logic or the entire workflow stalls. Building production-ready multi-agent systems requires significantly more engineering investment than building individual agents, and teams frequently underestimate this by a factor of three to five.

7. Agentic RAG Agents: The 2026 Enterprise Standard

Agentic Retrieval-Augmented Generation represents the convergence of LLM reasoning with dynamic, iterative information retrieval. Traditional RAG retrieves documents once and generates an answer from them. Agentic RAG changes this: the agent itself decides what to retrieve, when to retrieve again, which tools to call mid-process, and when it has gathered enough grounded information to produce a reliable response.

How It Works Technically

The agent begins by decomposing the query into a retrieval plan. It queries vector databases, SQL systems, APIs, web search, or graph databases based on the query requirements. If the first retrieval pass returns insufficient or contradictory information, the agent reformulates its query and retrieves again. This iterative loop continues until the agent's confidence in its gathered context crosses a threshold. Only then does it generate a final response grounded in verifiable sources. Key components include a vector database for semantic document retrieval, a hybrid search layer combining lexical and vector matching, a reranker to filter retrieved chunks by relevance, and a reasoning loop such as ReAct or Chain-of-Thought that evaluates intermediate retrieval results before deciding next steps.
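The iterative retrieval loop can be sketched with a toy lexical retriever in place of a vector database. Everything here is an assumption for illustration: the `DOCS` contents, the word-overlap matching, and the use of "enough distinct sources" as a stand-in for a confidence threshold.

```python
# Hypothetical in-memory knowledge base keyed by document id.
DOCS = {
    "policy-1": "Refunds are available within 30 days of purchase.",
    "policy-2": "Enterprise customers receive refunds within 60 days.",
    "faq-7": "Shipping takes five business days.",
}


def retrieve(query: str) -> list:
    """Toy lexical retriever: return ids of documents sharing a word with the query."""
    terms = set(query.lower().split())
    return [doc_id for doc_id, text in DOCS.items()
            if terms & set(text.lower().rstrip(".").split())]


def agentic_rag(sub_queries: list, needed: int, max_steps: int = 3) -> dict:
    """Retrieve step by step until enough distinct sources are gathered or the budget runs out."""
    sources = []
    steps = 0
    for query in sub_queries[:max_steps]:
        steps += 1
        for doc_id in retrieve(query):
            if doc_id not in sources:
                sources.append(doc_id)
        if len(sources) >= needed:      # stand-in for a confidence threshold
            break
    return {"sources": sources, "steps": steps, "grounded": len(sources) >= needed}


result = agentic_rag(["purchase", "enterprise"], needed=2)
```

The first pass finds only `policy-1`, so the loop runs a second retrieval and picks up `policy-2` before stopping. The `max_steps` argument plays the role of a retrieval depth limit.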

Why Enterprises Are Moving to Agentic RAG in 2026

- Reduces hallucination in domain-specific Q&A by grounding every claim in retrievable source documents

- Handles complex multi-hop questions that simple RAG fails on, where the answer requires combining information from multiple sources across multiple retrieval steps

- Supports compliance and auditability because every response can be traced back to specific retrieved documents

- Works across fragmented enterprise data by routing queries to the correct database, API, or knowledge base based on query intent

- Adapts to fresh information without requiring model retraining because retrieval happens at inference time

Real Deployment Challenges

- Latency cost: Every additional retrieval step adds latency. In high-volume support chat or real-time search, multiple retrieval loops make the system feel slow. Limit retrieval depth to two or three steps per query for latency-sensitive use cases

- Retrieval quality gates: If the retrieval layer returns poor-quality chunks, the agent's reasoning is grounded in bad information. Heading-aware chunking, metadata filtering, and reranking are non-negotiable in production

- Access control complexity: Enterprise RAG systems must enforce document-level permissions at query time so users only receive information they are authorised to access

- EU AI Act compliance: Organizations deploying RAG systems in regulated EU industries must ensure their retrieval and generation pipelines support auditability and explainability obligations, active from August 2026

8. Autonomous Agents: Fully Agentic Systems

Autonomous agents operate with minimal human intervention across extended tasks. They plan their own workflows, use dozens of tools, manage state across many steps, make decisions independently, and only pause for human input at predefined high-stakes checkpoints. In 2026, autonomous agents are moving from research experiments into production deployments in engineering, operations, and sales.

How It Works Technically

Autonomous agents combine all previous agent capabilities: memory, planning, utility optimization, learning, and tool use. They typically operate within an agentic loop: receive goal, plan action sequence, execute first action, evaluate result, update plan, execute next action, and repeat until the goal is achieved or a human checkpoint is triggered. The checkpoints are predefined by the deploying organization and represent decisions with consequences too significant or irreversible to delegate fully to the agent.
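A simplified sketch of the checkpoint mechanism follows. It leaves out re-planning and result evaluation to focus on the pause-for-approval step. The action names and the `approve` callback are hypothetical; in a real deployment the callback would block on a human decision.

```python
# Hypothetical actions that the deploying organization treats as irreversible.
CHECKPOINT_ACTIONS = {"deploy_to_production", "send_bulk_email"}


def run_autonomous(plan: list, approve) -> list:
    """Execute a plan step by step, pausing for approval at predefined checkpoints."""
    trace = []
    for action in plan:
        if action in CHECKPOINT_ACTIONS and not approve(action):
            trace.append((action, "blocked_pending_human"))
            break                      # stop rather than continue past a refused checkpoint
        trace.append((action, "executed"))
    return trace


plan = ["run_tests", "build_artifact", "deploy_to_production", "notify_team"]
auto_trace = run_autonomous(plan, approve=lambda action: False)
approved_trace = run_autonomous(plan, approve=lambda action: True)
```

Without approval the agent completes the two reversible steps and halts at the deployment. With approval it runs the whole plan.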

Where Businesses Deploy Them in 2026

- Software engineering: Amazon used autonomous agents to modernize thousands of legacy Java applications, completing in weeks what would have taken years manually

- Legal research automation: Agents that receive a legal question, search case law databases, cross-reference statutes, synthesize findings, and produce a draft memo

- Autonomous SDR agents: Identifying leads from CRM and web data, drafting personalized outreach, scheduling follow-ups, and routing qualified prospects to human salespeople

- Compliance monitoring: Agents that continuously scan regulatory update feeds, compare new requirements against current policies, flag gaps, and draft remediation summaries

- IT operations: Agents that monitor infrastructure, diagnose root causes, implement approved fixes, and escalate issues that require human judgment

The Human-in-the-Loop Design Question

Most competitors discuss autonomous agents as if more autonomy is always better. In 2026, the leading organisations are discovering that the question is not how autonomous can we make this agent, but at which exact decision points should a human be in the loop. Designing explicit human checkpoints for irreversible actions, such as sending emails at scale, committing code to production, or processing payments, prevents catastrophic errors while still delivering efficiency gains for everything else. This hybrid design is what Gartner calls Enterprise Agentic Automation.

9. Hierarchical and Orchestrator Agents

Hierarchical agent systems add a deliberate management layer to multi-agent architectures. An orchestrator agent sits at the top, receives high-level objectives, decomposes them into subtasks, assigns those tasks to specialised worker agents, monitors progress, resolves conflicts between agent outputs, and synthesises the final result. This mirrors how well-functioning human organisations work, with a manager coordinating a team of specialists.

How It Works Technically

The orchestrator uses a Supervisor Agent pattern, which accounts for 37% of agent usage on major platforms according to Databricks' 2026 State of AI Agents report. It maintains a task registry, monitors completion status, handles failures by reassigning tasks, and enforces priority ordering. Worker agents are specialised: they have access only to the tools, data, and permissions their role requires.
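The task registry, priority ordering, failure reassignment, and role-scoped tool access can be sketched together. The `Worker` class, the worker names, and the task fields are invented for this example, and trying workers in list order is a simplification of real scheduling.

```python
class Worker:
    def __init__(self, name: str, tools: set, healthy: bool = True):
        self.name, self.tools, self.healthy = name, tools, healthy

    def run(self, task: dict) -> str:
        if task["tool"] not in self.tools:          # least privilege: role-scoped tools only
            raise PermissionError(f"{self.name} may not use {task['tool']}")
        if not self.healthy:
            raise RuntimeError(f"{self.name} failed")
        return f"{self.name} finished {task['id']}"


def orchestrate(tasks: list, workers: list) -> dict:
    """Run tasks in priority order, reassigning each to the next eligible worker on failure."""
    registry = {t["id"]: "pending" for t in tasks}
    for task in sorted(tasks, key=lambda t: t["priority"]):
        for worker in workers:
            try:
                worker.run(task)
                registry[task["id"]] = f"done:{worker.name}"
                break
            except (PermissionError, RuntimeError):
                continue                            # try the next worker
        else:
            registry[task["id"]] = "failed"         # no worker could complete the task
    return registry


workers = [
    Worker("extractor-1", {"sql"}, healthy=False),
    Worker("extractor-2", {"sql"}),
    Worker("loader-1", {"warehouse"}),
]
tasks = [
    {"id": "load", "tool": "warehouse", "priority": 2},
    {"id": "extract", "tool": "sql", "priority": 1},
]
status = orchestrate(tasks, workers)
```

Here `extractor-1` is unhealthy, so the extract task is reassigned to `extractor-2`, and the load task only ever succeeds on the one worker permitted to touch the warehouse.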

Where Businesses Use Them in 2026

- Enterprise data pipelines: Orchestrator manages extraction agents, transformation agents, quality validation agents, and loading agents across a unified ETL workflow

- Content production systems: Orchestrator coordinates research agents, writing agents, editing agents, SEO agents, and publishing agents to produce articles at scale

- Customer lifecycle management: Orchestrator routes customers across acquisition agents, onboarding agents, support agents, retention agents, and upsell agents based on lifecycle stage

- Incident response: Orchestrator coordinates detection agents, diagnosis agents, remediation agents, and communication agents when a production system fails

Agent Type Quick Reference

Use this reference to match your problem type to the right agent architecture before making any technical decisions.

| Agent Type | Memory | Learning | Best For | Typical Use |
|---|---|---|---|---|
| Simple Reflex Agents | None | No | Fast fixed-rule responses in fully observable static environments | IoT triggers, spam filters, IVR routing |
| Model-Based Reflex Agents | Internal state | No | Partially observable environments where some information is hidden or delayed | Fraud detection, HVAC management |
| Goal-Based Agents | State plus goal | No | Multi-step planning where the path to an objective is not fixed | Route optimization, code generation |
| Utility-Based Agents | State plus utility model | Partial | Decisions requiring trade-off optimization across competing objectives | Dynamic pricing, product recommendations |
| Learning Agents | Full, continuously updated | Yes | Adaptive improvement over time in changing or unpredictable environments | Personalisation, ad optimization, predictive maintenance |
| Multi-Agent Systems | Distributed across agents | Yes | Large-scale parallel tasks requiring specialist expertise across domains | Software development pipelines, clinical decision support |
| Agentic RAG Agents | Vector database plus context window | Partial | Knowledge-grounded Q&A and document reasoning over private enterprise data | Enterprise search, compliance Q&A |
| Autonomous Agents | Full, persistent across sessions | Yes | End-to-end task completion across multiple systems with minimal human direction | SDR bots, legal research, IT operations |
| Hierarchical Orchestrator Agents | Distributed and centrally managed | Yes | Complex enterprise workflows requiring coordination of many specialist agents | Data pipelines, content ops, incident response |

Cross-Cutting Challenges Every Business Faces in 2026

Beyond the challenges specific to each agent type, every organization deploying AI agents in 2026 faces a common set of problems. Most competitor articles stop at individual agent limitations. These are the systemic challenges.

Governance Before Deployment, Not After

Research from Economist Impact found that 40% of organizations believe their AI governance program is insufficient. The consequence is significant: companies using AI governance tools get over 12 times more AI projects into production. Governance is not a compliance checkbox. It is the infrastructure that lets you scale agents without losing control. Build audit logging, explainability mechanisms, access controls, and accountability frameworks into your agent architecture from day one.

- Implement role-based access control at the agent tool level, not just at the application level

- Log every tool call, retrieval query, and decision with full context for post-hoc review

- Define escalation thresholds explicitly: which actions require human approval before execution

- Establish versioning and rollback procedures for agent configurations the same way you do for software deployments
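Three of the practices above (tool-level access control, full logging, explicit escalation) can be combined in one gate function. The role name, tool names, and policy tables are hypothetical, and a production system would write to durable audit storage rather than an in-memory list.

```python
import json
from datetime import datetime, timezone

# Hypothetical policy: which tools each agent role may call, and which need human approval.
ALLOWED_TOOLS = {"support_agent": {"lookup_order", "issue_refund"}}
NEEDS_APPROVAL = {"issue_refund"}
AUDIT_LOG = []


def call_tool(role: str, tool: str, args: dict, approved: bool = False) -> str:
    """Gate a tool call on role permissions and approval, and log the decision either way."""
    if tool not in ALLOWED_TOOLS.get(role, set()):
        decision = "denied"
    elif tool in NEEDS_APPROVAL and not approved:
        decision = "escalated"
    else:
        decision = "allowed"
    AUDIT_LOG.append(json.dumps({
        "time": datetime.now(timezone.utc).isoformat(),
        "role": role, "tool": tool, "args": args, "decision": decision,
    }))
    return decision


first = call_tool("support_agent", "lookup_order", {"order_id": "A-100"})
second = call_tool("support_agent", "issue_refund", {"order_id": "A-100"})
third = call_tool("support_agent", "delete_customer", {"customer_id": "C-7"})
```

Denied and escalated calls are logged as well as allowed ones, so a reviewer can see what the agent attempted, not just what it did.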

The Cold Start Problem Across Agent Types

Any agent that learns from experience starts with no experience. This is the cold start problem, and it affects learning agents, agentic RAG systems, and multi-agent systems alike. Planning your cold start strategy before deploying saves weeks of poor performance in production.

- For learning agents: collect a representative dataset before launch and use supervised pre-training to establish baseline behavior

- For RAG agents: invest in knowledge base quality before going live. Poorly chunked, untagged documents produce poor retrieval results regardless of LLM quality

- For multi-agent systems: test coordination logic on representative task distributions before exposing to real users

Cost Architecture Is a First-Class Design Concern

In 2026, treating agent cost optimization as an afterthought is how organizations end up with $50,000 monthly LLM API bills for workflows that generate $10,000 in value. The leading organizations build economic models into agent architecture from the start.

- Match model size to task complexity: simple classification subtasks do not need a large frontier model. Smaller fine-tuned models cost 95% less and perform comparably on narrow tasks

- Cache frequent retrievals: if 40% of your RAG queries ask similar questions, semantic caching eliminates redundant vector database calls

- Set planning depth limits per task type: cap agentic reasoning steps to what is sufficient, not the maximum allowed

- Monitor cost per successful task completion as a primary KPI, not just accuracy
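The cost-per-successful-task KPI is simple arithmetic, sketched here with made-up run records. The field names `cost_usd` and `success` are assumptions about how runs might be logged.

```python
def cost_per_successful_task(runs: list) -> float:
    """Total spend across all runs divided by the number of successful runs."""
    successes = sum(1 for r in runs if r["success"])
    if successes == 0:
        return float("inf")   # money spent, nothing delivered
    return sum(r["cost_usd"] for r in runs) / successes


# Hypothetical run records.
RUNS = [
    {"cost_usd": 0.12, "success": True},
    {"cost_usd": 0.30, "success": False},   # failed runs still cost money
    {"cost_usd": 0.18, "success": True},
]
kpi = cost_per_successful_task(RUNS)
```

The three runs cost $0.60 in total but only two succeeded, so the KPI is $0.30 per successful task, twice the $0.15 average of the successful runs alone.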

Security Attack Surfaces Are Multiplying

AI agents expand the attack surface of your systems in ways traditional security frameworks do not cover. Prompt injection attacks, where malicious content in a retrieved document hijacks the agent's behavior, are documented and exploitable. Poisoned RAG knowledge bases can cause agents to produce specific harmful outputs. Agent-to-agent communication channels in multi-agent systems create new vectors for privilege escalation.

- Sanitize and validate all external content before it enters an agent's context window

- Apply least-privilege principles to every agent's tool access: no agent should have access to tools it does not need for its specific role

- Implement anomaly detection on agent behavior patterns, not just on network traffic

- Test agents against adversarial inputs as part of your QA process, not just normal workflows

Integration With Legacy Systems

Most enterprise AI deployments do not replace existing systems. They sit on top of them. Modern agents communicate with legacy systems through orchestration layers that translate agent tool calls into API requests, database queries, or RPA actions. Enterprises adopting hybrid IT stacks can deploy agentic workflows without replacing existing infrastructure, but integration architecture requires explicit engineering investment. Budget for it.
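One way to picture such an orchestration layer is as a dispatch table that maps each agent tool call to an adapter for the legacy backend. The sketch below is illustrative only: the tool names, the REST path, and the SQL statement are hypothetical, and the adapters return a description of the request instead of actually executing it.

```python
from typing import Any, Callable, Dict

Handler = Callable[[Dict[str, Any]], Dict[str, Any]]


def crm_rest_adapter(args: Dict[str, Any]) -> Dict[str, Any]:
    """Translate a tool call into a REST request description (hypothetical endpoint)."""
    return {"backend": "rest", "request": f"GET /customers/{args['customer_id']}"}


def erp_sql_adapter(args: Dict[str, Any]) -> Dict[str, Any]:
    """Translate a tool call into a parameterized SQL query (hypothetical schema)."""
    return {
        "backend": "sql",
        "query": "SELECT status FROM orders WHERE id = ?",
        "params": (args["order_id"],),
    }


# The agent only sees tool names; the orchestration layer owns the backend details.
TOOL_ADAPTERS: Dict[str, Handler] = {
    "get_customer": crm_rest_adapter,
    "get_order_status": erp_sql_adapter,
}


def dispatch(tool_call: Dict[str, Any]) -> Dict[str, Any]:
    """Route an agent tool call to the adapter for the matching legacy system."""
    name = tool_call["name"]
    if name not in TOOL_ADAPTERS:
        raise ValueError(f"No adapter registered for tool {name!r}")
    return TOOL_ADAPTERS[name](tool_call.get("arguments", {}))


print(dispatch({"name": "get_order_status", "arguments": {"order_id": 42}}))
```

Keeping the query parameterized in the adapter means agent-supplied values never get concatenated into SQL, which matters when the agent's inputs may come from untrusted content.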

Industry Use Cases

Healthcare and Life Sciences

Most coverage focuses on appointment scheduling and basic symptom checkers. What is actually being deployed in 2026 is significantly more sophisticated.

- Clinical trial protocol analysis: Agentic RAG agents that extract eligibility criteria from trial documents, cross-reference patient records, and flag matches, reducing screening time from weeks to hours

- Pharmacovigilance: Multi-agent systems that continuously monitor adverse event databases, scientific literature, and regulatory bulletins to flag emerging drug safety signals

- Surgical workflow orchestration: Hierarchical agents that coordinate pre-operative checklist completion, OR scheduling, equipment preparation, and post-operative care handoff across systems

- Medical coding and billing: Learning agents trained on ICD-10 and CPT coding patterns that improve accuracy over time and flag claims likely to be denied before submission

Financial Services

- Real-time KYC: Multi-agent systems that simultaneously query identity verification APIs, sanctions lists, PEP databases, and adverse media sources to complete due diligence in seconds rather than days

- Earnings analysis automation: Agentic RAG agents that retrieve SEC filings, earnings transcripts, analyst reports, and market data to produce structured analysis documents for portfolio managers

- Credit underwriting: Utility-based agents that evaluate loan applications by weighing hundreds of data signals across traditional credit data, alternative data sources, and behavioral indicators

- Regulatory reporting: Autonomous agents that extract data from trading systems, apply regulatory calculation rules, and draft FINRA, SEC, or MiFID II reports with full audit trails
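The real-time KYC pattern in the list above comes down to fanning out independent checks in parallel and merging the results. The sketch below uses stub functions in place of real provider calls; the function names and the hard-coded results are hypothetical and exist only to make the example runnable.

```python
from concurrent.futures import ThreadPoolExecutor


# Stub checks standing in for real provider calls; results are hard-coded.
def check_identity(name: str) -> dict:
    return {"source": "identity", "hit": False}


def check_sanctions(name: str) -> dict:
    return {"source": "sanctions", "hit": False}


def check_pep(name: str) -> dict:
    return {"source": "pep", "hit": True}


def check_adverse_media(name: str) -> dict:
    return {"source": "adverse_media", "hit": False}


CHECKS = [check_identity, check_sanctions, check_pep, check_adverse_media]


def run_kyc(name: str) -> dict:
    """Run all checks concurrently and flag the case if any source reports a hit."""
    with ThreadPoolExecutor(max_workers=len(CHECKS)) as pool:
        # pool.map preserves the order of CHECKS in its results.
        results = list(pool.map(lambda check: check(name), CHECKS))
    flagged = [r["source"] for r in results if r["hit"]]
    return {"name": name, "flagged_sources": flagged, "needs_review": bool(flagged)}


print(run_kyc("Example Applicant"))
```

With real network calls, total latency approaches that of the slowest single check instead of the sum of all four.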

E-commerce and Retail

- Assortment planning: Multi-agent systems that coordinate demand forecasting agents, supplier pricing agents, and merchandising agents to optimize inventory investment across SKUs

- Return fraud detection: Learning agents that identify return abuse patterns across customer, product, and logistics dimensions and flag high-risk returns for manual review

- Personalized bundle creation: Utility-based agents that construct product bundles dynamically based on individual purchase history, margin targets, and real-time inventory levels

- Supplier negotiation support: Goal-based agents that analyze contract terms, benchmark against market rates, and draft counter-proposals based on negotiation objectives

Legal and Compliance

- Contract review at scale: Agentic RAG agents that extract obligations, deadlines, and risk clauses from contract documents and compare them against internal policy standards

- Regulatory change monitoring: Autonomous agents that track regulatory update feeds across multiple jurisdictions and map new requirements to internal policy gaps

- Litigation research: Goal-based agents that, given a legal question, plan a research sequence across case law, statutory text, and secondary sources, then produce cited draft memos

- GDPR and data privacy auditing: Multi-agent systems that inventory data flows, classify personal data, identify retention violations, and generate remediation plans

Manufacturing and Supply Chain

- Predictive quality control: Learning agents that analyze sensor data streams from production lines to predict defect probability before a component completes manufacturing

- Supplier risk monitoring: Multi-agent systems that continuously evaluate supplier financial health, geopolitical exposure, and delivery performance to trigger diversification recommendations

- Maintenance work order optimization: Utility-based agents that balance equipment criticality, parts availability, technician scheduling, and production impact to sequence maintenance work orders

- Energy consumption optimization: Autonomous agents that adjust production scheduling based on real-time energy pricing, equipment efficiency ratings, and demand forecasts
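To make the utility-based idea from the maintenance work order example concrete, here is a minimal sketch that scores each work order with a weighted utility function and sequences orders by score. The weights and fields are hypothetical; in practice they would be set or learned from your own operational data.

```python
from dataclasses import dataclass


@dataclass
class WorkOrder:
    order_id: str
    criticality: float          # 0..1, how critical the equipment is
    parts_available: bool
    technician_available: bool
    production_impact: float    # 0..1, disruption caused by doing the work now


# Hypothetical weights expressing the trade-off between competing objectives.
WEIGHTS = {"criticality": 0.5, "parts": 0.2, "technician": 0.2, "impact": 0.1}


def utility(order: WorkOrder) -> float:
    """Higher is better: reward urgency and readiness, penalize production disruption."""
    return (
        WEIGHTS["criticality"] * order.criticality
        + WEIGHTS["parts"] * float(order.parts_available)
        + WEIGHTS["technician"] * float(order.technician_available)
        - WEIGHTS["impact"] * order.production_impact
    )


def sequence(orders: list[WorkOrder]) -> list[WorkOrder]:
    """Return work orders sorted from highest to lowest utility."""
    return sorted(orders, key=utility, reverse=True)


orders = [
    WorkOrder("C", 0.3, True, True, 0.0),
    WorkOrder("B", 0.9, False, True, 0.1),
    WorkOrder("A", 0.9, True, True, 0.5),
]
print([o.order_id for o in sequence(orders)])
```

Changing a single weight reorders the queue, which is what separates a utility-based agent from a fixed rule set: the trade-off is explicit and tunable.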

How to Choose the Right AI Agent Type for Your Business

The most expensive mistake businesses make with AI agents in 2026 is choosing an architecture before defining the problem. The following decision framework helps you sequence the conversation correctly.

Step 1: Classify Your Environment

- Is the environment fully observable? Start with simple or model-based reflex agents

- Is the environment partially observable? You need, at a minimum, a model-based architecture

- Does the task require making trade-offs between competing objectives? You need a utility-based architecture

- Does the task require retrieving and reasoning over large volumes of private or specialized knowledge? You need agentic RAG

- Does the task span multiple systems, require parallel execution, or need specialized expertise in different domains? You need multi-agent or hierarchical architecture

- Does the task need to run end-to-end with minimal human intervention? You need autonomous agent design with defined human checkpoints
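The questions above can be encoded as a small checklist function, which is a handy way to make the classification explicit in a design review. This is only a sketch: the field names are assumptions, and the returned labels simply mirror the wording of the list.

```python
from dataclasses import dataclass


@dataclass
class Environment:
    fully_observable: bool
    competing_objectives: bool = False
    needs_private_knowledge: bool = False
    spans_multiple_systems: bool = False
    end_to_end_autonomy: bool = False


def recommend(env: Environment) -> list[str]:
    """Map the Step 1 answers to the architecture capabilities they call for."""
    recs = [
        "simple or model-based reflex"
        if env.fully_observable
        else "model-based (minimum)"
    ]
    if env.competing_objectives:
        recs.append("utility-based")
    if env.needs_private_knowledge:
        recs.append("agentic RAG")
    if env.spans_multiple_systems:
        recs.append("multi-agent or hierarchical")
    if env.end_to_end_autonomy:
        recs.append("autonomous with human checkpoints")
    return recs


env = Environment(
    fully_observable=False,
    needs_private_knowledge=True,
    end_to_end_autonomy=True,
)
print(recommend(env))
```

The result is a list, not a single answer, because real deployments often combine several of these capabilities.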

Step 2: Define Your Performance Budget

- What is the maximum acceptable latency? Sub-second responses rule out deep planning and multi-step RAG

- What is the maximum acceptable cost per task completion? Higher architecture complexity multiplies inference costs

- What is the minimum acceptable accuracy? Higher accuracy requirements justify more expensive retrieval and verification layers

- What is the failure cost? Higher failure costs justify more human oversight and more conservative automation scope

Step 3: Map Your Data and Tool Access

- What data does the agent need? Is it accessible via API, database, or file system?

- Is the data structured, semi-structured, or unstructured? Unstructured data almost always requires RAG

- What tools does the agent need to execute actions? Are they available as APIs? Do they require authentication and authorization logic?

- Are there regulatory or security constraints on what the agent can access or store?

Step 4: Plan Your Governance Before Building

- Which actions are reversible (safe to automate) and which are irreversible (require human approval)?

- Who is accountable when the agent produces a wrong output or takes a harmful action?

- What audit trail format does your compliance team require?

- What is the escalation path when the agent encounters a scenario outside its training distribution?
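The reversible-versus-irreversible distinction and the audit trail requirement can be prototyped as a simple approval gate. The sketch below is illustrative: the action names, the agent identifier, and the log fields are hypothetical, and your compliance team would dictate the actual audit format.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional

# Hypothetical set of actions that cannot be undone once executed.
IRREVERSIBLE_ACTIONS = {"send_payment", "delete_record"}


@dataclass
class ApprovalGate:
    audit_log: list = field(default_factory=list)

    def submit(self, agent: str, action: str, approved_by: Optional[str] = None) -> str:
        """Execute reversible actions; hold irreversible ones until a human approves."""
        if action in IRREVERSIBLE_ACTIONS and approved_by is None:
            status = "pending_approval"
        else:
            status = "executed"
        # Every decision is logged, including the ones that were held back.
        self.audit_log.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "action": action,
            "approved_by": approved_by,
            "status": status,
        })
        return status


gate = ApprovalGate()
print(gate.submit("billing-agent", "draft_email"))
print(gate.submit("billing-agent", "send_payment"))
print(gate.submit("billing-agent", "send_payment", approved_by="j.doe"))
```

Recording who approved each irreversible action also gives a concrete answer to the accountability question in the list above.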

Step 5: Start Narrow and Expand

Every successful AI agent deployment in 2026 started with one well-defined use case with measurable success criteria. Not a platform. Not a strategy. One task. Once that task is performing reliably in production with real users and real consequences, you have the organizational knowledge and trust to expand scope. Organizations that tried to deploy enterprise-wide agentic platforms from day one almost universally failed or drastically scaled back.

The Trends Shaping the Rest of 2026 and Beyond

Smaller, Specialized Models Will Replace One-Size-Fits-All LLMs for Many Agent Tasks

The trend toward domain-specific, smaller reasoning models is accelerating. Instead of routing every agent subtask through a large general-purpose model, enterprises are deploying fine-tuned smaller models for specific agent roles. A coding agent, a compliance classification agent, and a customer intent detection agent each run on models tuned for their exact function, at a fraction of the cost and latency of a general model.

Agent-to-Agent Protocols Are Standardizing

The emerging Agent-to-Agent protocol and the Model Context Protocol are establishing standards for how agents discover each other, communicate task assignments, and report results. This is the microservices moment for AI agents. Just as REST APIs standardized how services communicate on the web, these protocols are standardizing agent communication, making it possible to compose complex multi-agent systems from independently deployed, interoperable agent services.

Governance Agents Are Becoming Standard Infrastructure

In 2026, leading organizations are deploying dedicated governance agents that monitor other AI systems for policy violations, detect anomalous agent behavior, and enforce audit requirements automatically. Governance is no longer a layer applied after the fact. It is an agent in the system. Organizations using governance tooling get over 12 times more AI projects into production because stakeholders trust the outputs.

Human-in-the-Loop Is Being Redesigned, Not Reduced

The most important shift in agent design thinking in 2026 is moving from asking "How do we reduce human involvement?" to asking "At exactly which decision points is human judgment irreplaceable?" The answer is consistently at high-stakes, irreversible, or ethically complex junctures. Elsewhere, automation is appropriate. This reframe produces systems that are both more efficient and more trustworthy than either full automation or traditional human-supervised workflows.

Agentic AI Is Becoming the Competitive Infrastructure Layer

By the end of 2026, agentic AI will have moved from competitive advantage to competitive table stakes in several industries. Financial services firms without autonomous compliance monitoring agents will face higher regulatory costs. E-commerce platforms without personalization and dynamic pricing agents will lose margin to competitors that have them. SaaS companies without AI agents embedded in their core product workflows will lose to products that have them. The window for early-mover advantage is closing. The window for avoiding competitive disadvantage is what remains.

Choose the Right AI Agent for Your Business Needs with Digisoft Solution

Building AI agents that actually work in production requires more than picking the right architecture from a list. It requires a partner who understands your industry context, data infrastructure, integration constraints, and governance requirements. That is exactly what Digisoft Solution brings to every engagement.

What Digisoft Solution Delivers

- AI Agent Strategy and Architecture Consulting: We start with your business problem, not a technology agenda. Our team maps your use case, environment, and data to the right agent type and builds the architecture specification before a line of code is written

- Custom Agent Development: From simple reflex agents for IoT monitoring to full multi-agent orchestration systems for enterprise workflows, we build production-ready agents tailored to your tech stack, data sources, and operational requirements

- Agentic RAG Implementation: We design and deploy knowledge retrieval systems grounded in your private enterprise data, with proper chunking, hybrid search, access control, and observability built in from the start

- Multi-Agent System Engineering: We architect and build supervisor-worker agent hierarchies using frameworks like LangGraph, AutoGen, and CrewAI, with full coordination logic, fault isolation, and governance layers

- Integration With Existing Systems: Our agents connect to your CRMs, ERPs, databases, legacy APIs, and third-party platforms through secure orchestration layers without requiring you to replace working infrastructure

- Governance, Security, and Compliance Engineering: We build audit logging, role-based tool access, human-in-the-loop checkpoints, and escalation frameworks into every agent deployment so you can scale confidently in regulated environments

- Ongoing Optimization and Monitoring: Post-deployment, we monitor agent performance, identify degradation patterns, tune retrieval systems, and iterate on agent behavior based on real production data

Industries We Serve

Healthcare and Life Sciences, Financial Services, E-commerce and Retail, Manufacturing, Legal and Compliance, and SaaS Platforms.

Conclusion

AI agents in 2026 are not a single technology. They are a spectrum of architectures, each suited to specific problem shapes, data environments, and operational requirements. Simple reflex agents still solve important problems cheaply and reliably. Multi-agent systems are enabling enterprise workflows that were not previously automatable. Agentic RAG is making enterprise knowledge accessible and trustworthy in ways vanilla chatbots never could. Autonomous agents are beginning to execute multi-day tasks across complex systems with minimal human direction.

The businesses that get this right in 2026 are the ones that start with the problem, not the technology. They define their environment, classify their task, establish their governance requirements, and then select the agent architecture that fits. They start with one well-defined use case, prove value in production, and expand from a foundation of demonstrated results.

The businesses that struggle are the ones that chase the most sophisticated architecture because it sounds impressive, deploy without governance planning, and underestimate the engineering investment required to go from demo to production.

You now have the complete technical picture of every major agent type, their real deployment challenges, their industry use cases, and the framework to choose correctly. The question is what you build first.

Kapil Sharma
